ETL (Extract, Transform, Load) is a process that extracts data from multiple sources, transforms it into a unified format, and loads it into a target system. It is a critical part of data warehousing, business intelligence, and analytics because it lets organizations clean, consolidate, and store data from many sources in a single data warehouse.

ETL workloads gather, process, and prepare large volumes of data for analysis. However, managing them can be challenging, with several potential roadblocks affecting performance, efficiency, and accuracy. In this blog post, we will explore what ETL workloads are, how they work, and the common challenges associated with managing them.

What Are ETL Workloads?

ETL is a data integration process that involves extracting data from one or more sources, transforming it into a standard format, and loading it into a target system. The process typically involves multiple steps, each of which has its own challenges and requirements.

- Extract: The first step in ETL involves extracting data from multiple sources, which may be structured or unstructured and may reside in on-premises systems or cloud-based platforms. Extracting data can be challenging, particularly when dealing with large volumes of data or when working with disparate data sources that use different formats, protocols, or access methods.

- Transform: The next step in ETL involves transforming data into a format that can be loaded into the target system. This typically involves data cleaning, normalization, aggregation, and other operations that are required to ensure the data’s consistency, accuracy, and completeness. Transforming data can be challenging, particularly when working with complex data structures, dependencies, or quality issues.

- Load: The final step in ETL involves loading the transformed data into a target system, such as a data warehouse, data lake, or database. Loading data can be challenging, particularly when dealing with large volumes of data, multiple data sources, or complex data models that require careful management of data relationships, schema definitions, and indexing.
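The three steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a production pipeline: the `orders` table, the column names, and the sample CSV are all hypothetical, and an in-memory SQLite database stands in for the target system.

```python
import csv
import io
import sqlite3

# Hypothetical source data standing in for an export from an operational system.
RAW_CSV = """order_id,customer,amount
1, Alice ,10.50
2,bob,
3,Carol,7.25
"""


def extract(text):
    """Extract: read rows from a CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim and normalize names, cast types, drop incomplete rows."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip rows with a missing amount
        customer = row["customer"].strip().title()
        cleaned.append((int(row["order_id"]), customer, float(amount)))
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


def run_etl(text, conn):
    """Run extract, transform, and load in order, then return the loaded rows."""
    load(transform(extract(text)), conn)
    query = "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
    return conn.execute(query).fetchall()
```

Calling `run_etl(RAW_CSV, sqlite3.connect(":memory:"))` loads only the two complete rows. Real workloads typically replace each function with connectors, a transformation engine, and a warehouse loader, but the shape of the pipeline stays the same.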

What Is The Purpose Of ETL?

The purpose of ETL is to provide a single source of truth by bringing together data from disparate sources, so that high-quality data is used for business analysis and decision-making. Through this process, businesses can gain a better understanding of the data they have and make more informed decisions. ETL also supports data migration, cleansing, validation, and integration. It is an efficient way to move large amounts of data quickly and accurately, which can give businesses a competitive advantage.

What Are The Challenges In ETL Workloads?

Several common roadblocks can affect the performance, efficiency, and accuracy of ETL workloads:

- Data quality issues: Data quality is a key challenge in ETL workloads, particularly when dealing with data from multiple sources. Problems can include missing or incomplete data, inconsistent data formats, or data that is not relevant to the target system. These issues can lead to inaccurate results and can be time-consuming to resolve.

- Performance issues: ETL workloads can be resource-intensive, particularly when dealing with large volumes of data. Performance issues can include slow query response times, long data processing times, and high resource utilization, which can impact the overall efficiency of the ETL process.

- Complexity: ETL workloads involve multiple data sources, complex data models, and data dependencies. Managing ETL processes can require careful coordination between different teams and systems, and may involve multiple tools and technologies.

- Data security: Data security is a critical concern in ETL workloads, particularly when dealing with sensitive data. ETL processes must be designed to ensure that data is protected throughout the data lifecycle, including during extraction, transformation, and loading.

- Scalability: ETL workloads must be designed to scale as data volumes and complexity increase. Scaling can be challenging, particularly when working with legacy systems or cloud-based platforms that have limited resources.
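To make the data quality challenge concrete, here is a small sketch of a validation step that could run before loading. The required fields (`id`, `email`, `signup_date`) and the expected date format are assumptions chosen for illustration; a real pipeline would take its rules from the target schema.

```python
from datetime import datetime

# Hypothetical schema: fields every record must contain.
REQUIRED_FIELDS = ("id", "email", "signup_date")


def find_quality_issues(record):
    """Return a list of human-readable problems found in one record."""
    issues = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None or not str(value).strip():
            issues.append(f"missing {field}")

    # Check the date format only when a value is present.
    date_value = str(record.get("signup_date") or "").strip()
    if date_value:
        try:
            datetime.strptime(date_value, "%Y-%m-%d")
        except ValueError:
            issues.append("signup_date is not in YYYY-MM-DD format")
    return issues


def split_records(records):
    """Separate clean records from rejected ones, keeping the reasons."""
    clean, rejected = [], []
    for record in records:
        issues = find_quality_issues(record)
        if issues:
            rejected.append((record, issues))
        else:
            clean.append(record)
    return clean, rejected
```

Keeping the rejected records together with their reasons, rather than silently dropping them, makes quality problems easier to trace back to the source system.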

How Can nOps Help With ETL Workloads?

ETL workloads are an essential part of business intelligence and analytics, but they can be complex and resource-intensive. Here’s where nOps comes in!

nOps is a tool that helps users manage and monitor their cloud infrastructure. For ETL workloads, it can help in two main ways.

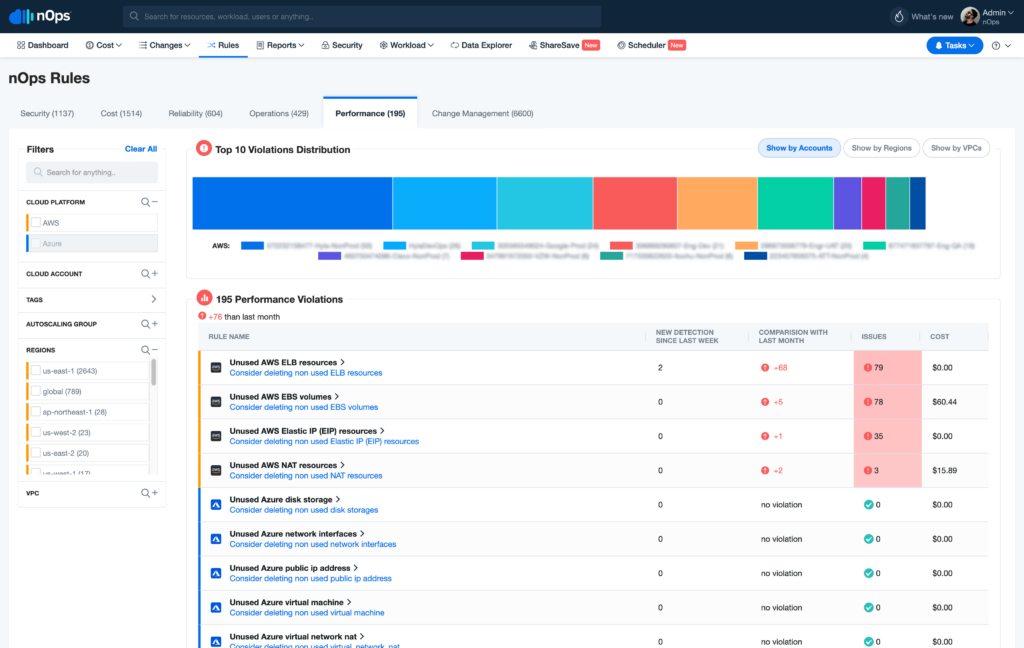

- First, ETL workloads often involve processing and moving large amounts of data between different systems. nOps provides insights into the performance and efficiency of your cloud resources, which can help you identify bottlenecks that slow down your ETL processes and optimize your infrastructure for smooth, efficient data movement.

- Second, nOps can help with cost management for ETL workloads. ETL processes can be resource-intensive, which can lead to high cloud costs. nOps helps you track and manage your cloud spend by providing Showbacks: reports that visually represent how much your resources are costing you, broken down by usage type, so you can identify where to reduce costs.

Overall, nOps helps you manage your cloud infrastructure, including the resources behind your ETL workloads. It can help you identify performance issues, optimize your resources for efficient data movement, and manage your costs through Showbacks. With nOps, you don’t have to download your own Cost and Usage Reports (CUR) and deal with the complications, since we handle that for you!

Your team focuses on innovation while nOps runs optimization on autopilot, helping you track, analyze, and optimize your cloud costs!

Let us help you save on costs! Sign up for nOps today.