Getting Engineers To Take Action: Bridging The Gap Between Engineering And Finance!

Engineers have long been trained to be customer obsessed. We define SLAs as an expression of our commitment to customers in terms of availability, performance, functionality, and security. In a pre-cloud world, controlling the cost of the services that we develop and run was a shared responsibility of IT, finance, and procurement teams: we planned for capacity, informed these teams about the resources required to meet load projections, and perhaps rebalanced in advance based on budget feedback. In the data center era, no one complained about resource consumption in a shared environment until capacity started to run short.

Today, engineers have a more direct impact on cloud spend, but incentives haven’t changed much. We’re still rewarded based on how many 9’s we achieve and how quickly we deliver innovation, yet the cost impact of those decisions is often not evident until the bill comes. Cloud economics have become punitive for engineers: we’re told to cut, often with little ability to differentiate or allocate cost from one feature or service to the next. Adding to the complexity, there may be many teams across an organization with differing requirements, cadences, and goals.

All of this leads to a tremendous amount of frustration across finance, executive, and product teams struggling to find a way to quantify and rein in expenses, all asking the same question: why is it so challenging to get engineers to act?

A Cultural Shift

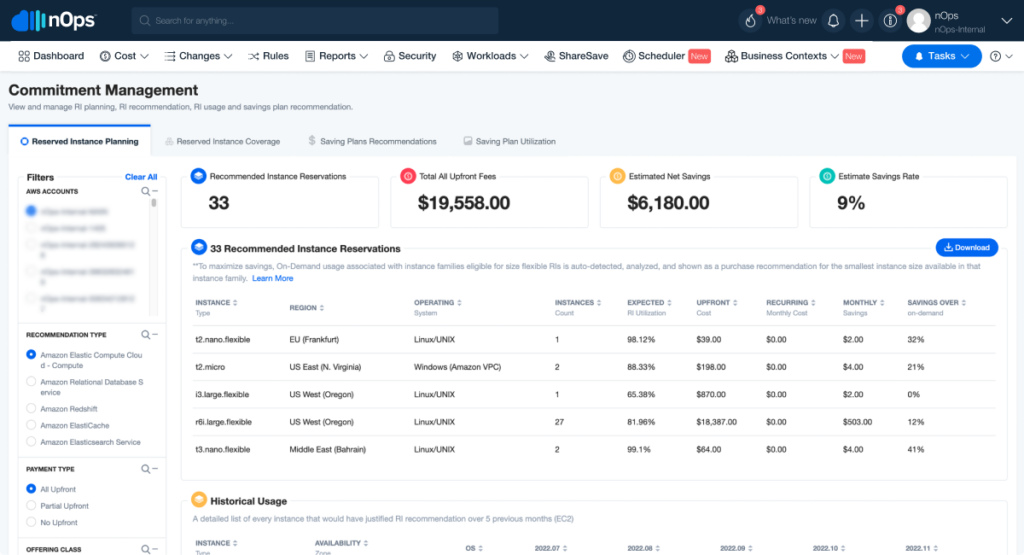

FinOps proposes a cultural shift in the way that organizations realize the promise of the public cloud: a truly elastic, consumption-based model. The FinOps framework proposes that there are two levers that can be pulled to control cost: pay less and use less. At nOps, we remove all of the complexity of AWS savings tools so that engineers can focus on innovation and on the complicated piece of the equation, which is applying the principles of unit economics and taking advantage of the elastic nature of the cloud. In this series, we will discuss our journey to understand why engineers don’t act, or seem unable to act, on savings opportunities.

The FinOps Foundation proposes three phases in the journey to FinOps maturity: Inform, Optimize, and Operate. When we set out to truly allocate every penny of our cloud spend, we came out of the experience not only with an understanding of how our dollars were spent but also with a better understanding of how to apply the right context for different personas and purposes on our team. This is how nOps Business Contexts was born and tested: in our own organization, as a joint effort across teams and a centralized function. We also learned more than we expected about how to prioritize, plan, and act on optimizations. None of this would have been possible without buy-in from the executive team to embrace FinOps as an organizational practice.

Context Is Everything!

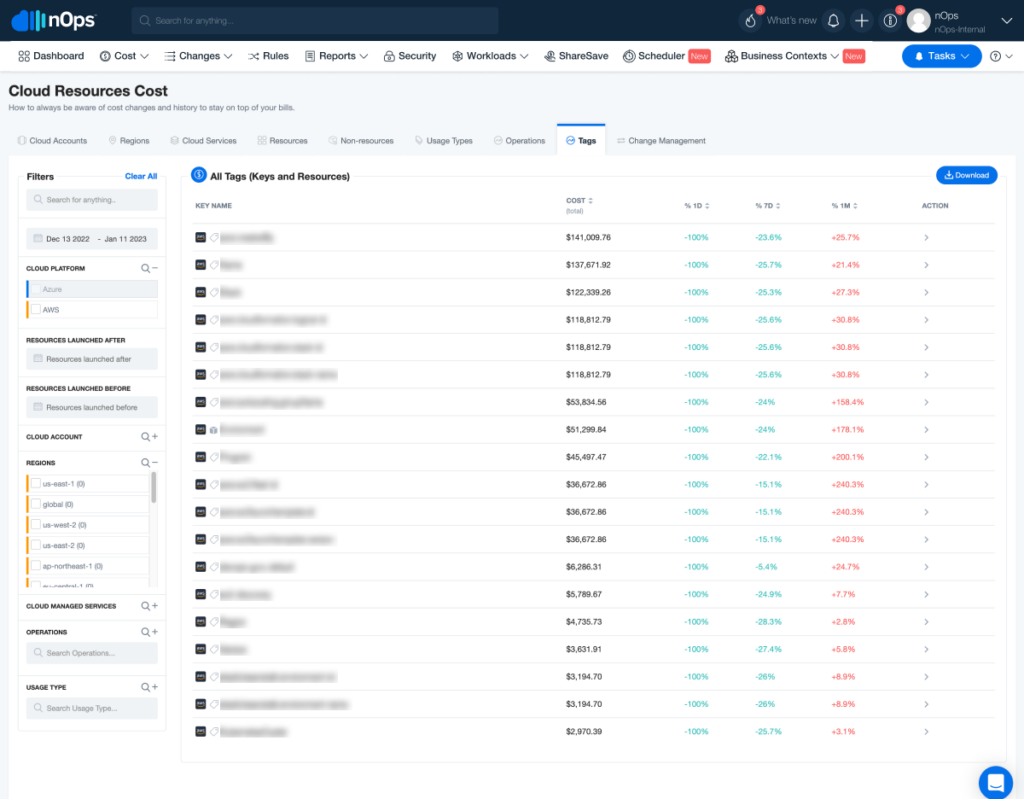

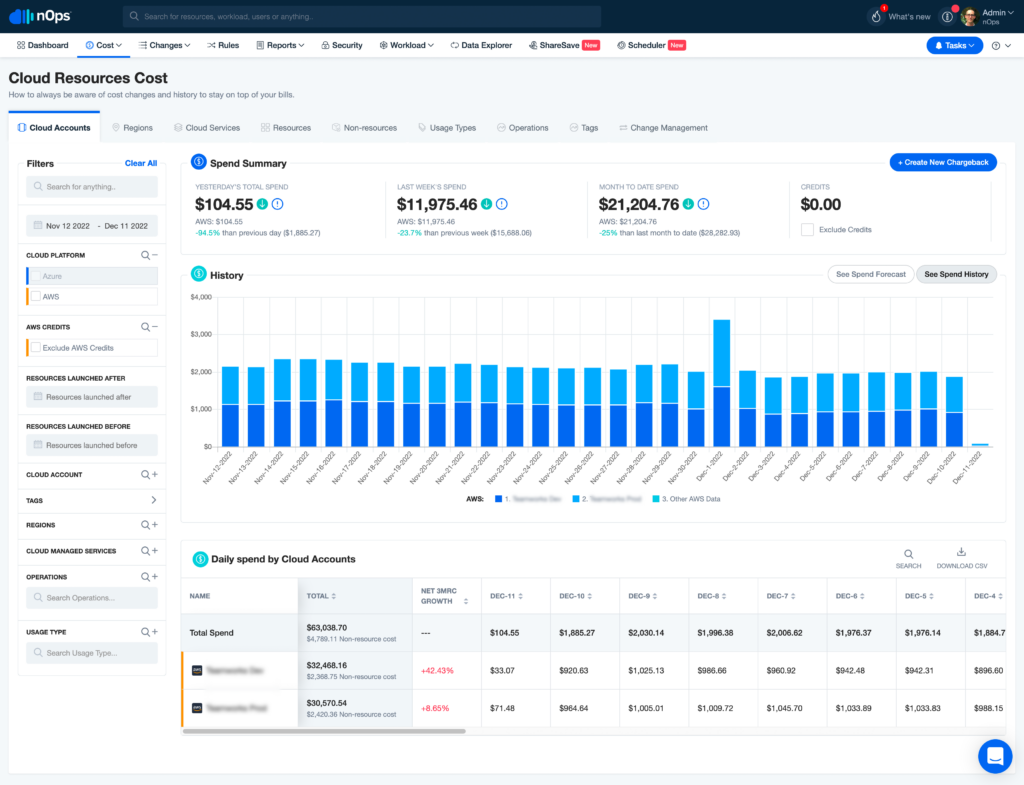

Finance thinks of resources in terms of Cost of Goods Sold (COGS), Research and Development, Marketing, and Sales. Engineering, on the other hand, might need to organize by environment, service, or even by the different tools used in delivery. While tagging best practices might help us get some of this visibility, constantly re-tagging resources is time-consuming and might not give us visibility into non-resource-related costs like network consumption, marketplace purchases, or expenses that need to be categorized differently for different views.

nOps Business Contexts allows us to create Showbacks for an unlimited number of views and gives us the ability to:

- Combine tags to account for different views

- Create showback values when no tag exists

- Create and combine allocation rules based on account, service, operations, and usage types

- Apply business logic to distribute expenses across teams, services, or cost centers
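
To make the idea of combining tags with fallback allocation rules concrete, here is a minimal sketch in Python. It is not how nOps Business Contexts is implemented; the `LineItem` fields, the rule order, the category names, and the sample costs are all hypothetical and only illustrate the kind of logic the list above describes.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class LineItem:
    """One simplified billing record; field names are illustrative only."""
    account: str
    service: str
    usage_type: str
    cost: float
    tags: dict = field(default_factory=dict)


def classify(item: LineItem) -> str:
    """Return a showback category using the first rule that matches."""
    # Rule 1: combine two tags into one view when both are present.
    if "team" in item.tags and "env" in item.tags:
        return f"{item.tags['team']}/{item.tags['env']}"
    # Rule 2: untagged network spend is matched on usage type instead.
    if "NatGateway" in item.usage_type:
        return "shared/network"
    # Rule 3: fall back to the owning account (hypothetical account name).
    if item.account == "sandbox":
        return "engineering/dev"
    # Anything left over is surfaced rather than silently dropped.
    return "unallocated"


def showback(items):
    """Sum cost per showback category."""
    totals = defaultdict(float)
    for item in items:
        totals[classify(item)] += item.cost
    return dict(totals)


# Made-up sample data for illustration.
items = [
    LineItem("prod", "AmazonEC2", "BoxUsage:m5.large", 120.0,
             {"team": "billing", "env": "prod"}),
    LineItem("prod", "AmazonEC2", "NatGateway-Bytes", 45.0),
    LineItem("sandbox", "AmazonRDS", "InstanceUsage:db.t3.medium", 30.0),
    LineItem("prod", "AmazonS3", "TimedStorage-ByteHrs", 5.0),
]
report = showback(items)
print(report)
```

Keeping an explicit "unallocated" bucket is a deliberate choice in this sketch: it makes the spend that no rule covers visible, which is usually where the next rule needs to be written.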

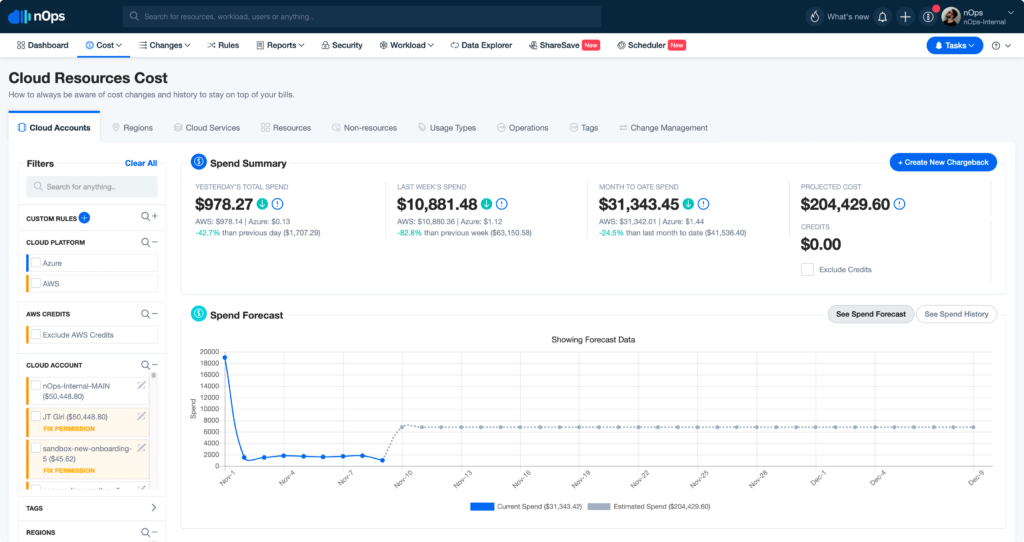

Our Cloud Resource Cost capability allows us to view, track, and report on showbacks from any dimension and at any time resolution — meaning that any team can quickly see how the showback category they are responsible for contributes to cloud resource cost and scores in relation to other groups.

What did the team learn in this process?

Viewing spend by AWS service can be deceiving: AWS offers hundreds of services that can be billed across thousands of dimensions. At the service level, we might see that EC2 spend is too high; only when we peel back the layers do we realize that NAT Gateway expenses make network configuration a bigger opportunity than other, more disruptive and complex optimization tasks.

Allocating our EKS expenses is possible and adds real value: Creating views that visualize expenses by node group and allocating shared environments based on consumption helped us prioritize our spend reduction more effectively. Being able to calculate the cost of the different services running in EKS has helped us prioritize adopting newer services like AWS Batch running on Fargate for long-running batch jobs that are hard to scale.
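
Allocating a shared environment based on consumption can be as simple as splitting the shared bill in proportion to each service's measured usage. The sketch below assumes a single usage metric (for example, requested CPU core-hours) and uses made-up service names and numbers; a real allocation would likely blend several metrics.

```python
def allocate_shared_cost(total_cost, usage_by_service):
    """Split a shared cost across services in proportion to their usage."""
    total_usage = sum(usage_by_service.values())
    if total_usage == 0:
        # Nothing consumed: assign no cost rather than divide by zero.
        return {name: 0.0 for name in usage_by_service}
    return {
        name: total_cost * usage / total_usage
        for name, usage in usage_by_service.items()
    }


# Hypothetical CPU core-hours consumed on one shared node group.
usage = {"api": 50.0, "ingest": 30.0, "reports": 20.0}
shares = allocate_shared_cost(1000.0, usage)
print(shares)
```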

Tagging can hide problems as easily as it can solve them: We had database snapshots with an improperly applied tag that would never have surfaced if we had not gone through the process of building contexts meaningful to the engineering team. It wasn’t until we could apply filters to those resources that we realized the snapshots were not configured correctly, creating thousands of dollars in storage expenses each month.

With proper service tracking, engineers can justify investing in new technologies: With our service-based showback, we were able to compare and contrast the growing cost of OpenSearch with Apache Druid, which was more suitable for the size and type of queries that we are running. Seeing the Druid costs instantly appear has helped us to prioritize migration work and will soon result in thousands in cost reductions as our OpenSearch reservations expire.

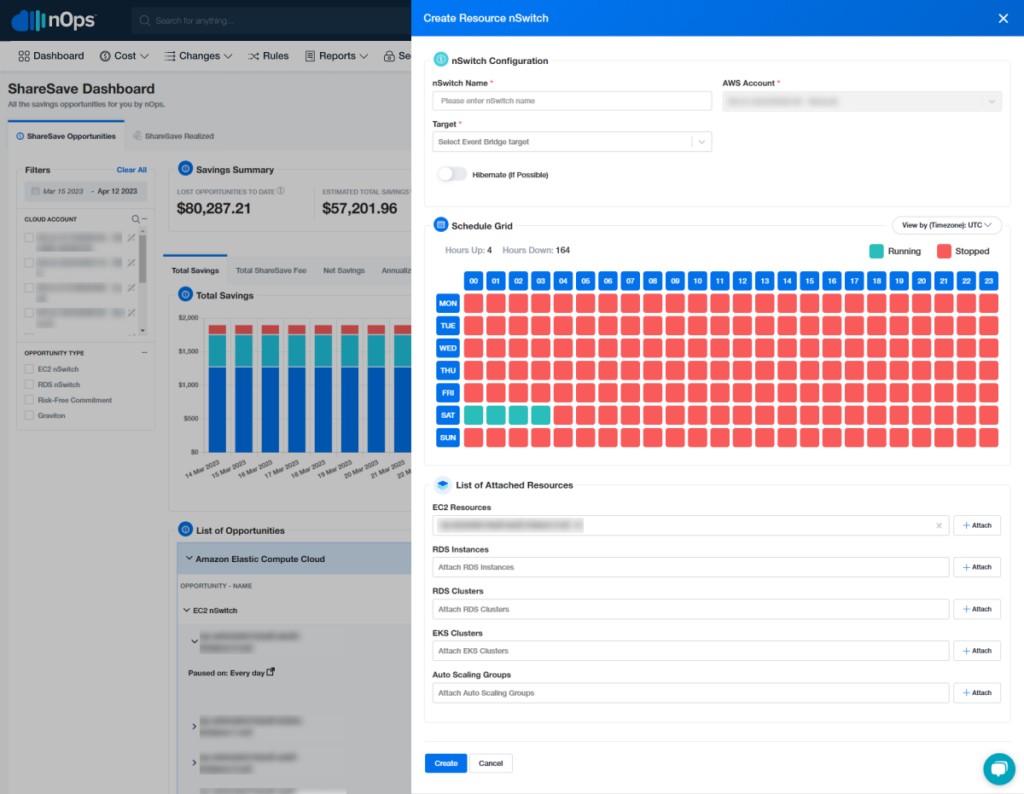

Priorities will change as part of the journey: Although conventional wisdom may tell us that our biggest cost savings opportunities are in optimizing large production environments, as you begin to quantify and justify these expenses in terms of unit economics, your attention may shift to smaller targets like QA and dev environments. We realized that some of our quickest and most meaningful wins would come from scheduling our lab environments to shut down during off hours, which contributed to 20% savings on our overall EC2 and RDS bills.
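
A quick back-of-the-envelope calculation shows why off-hours scheduling is attractive. The sketch below assumes a hypothetical schedule of 12 hours a day, 5 days a week, and hourly compute billing; it covers compute hours only, since storage typically keeps billing while instances are stopped. It does not reproduce the 20% figure above, which depends on how much of the overall bill the scheduled environments represent.

```python
HOURS_PER_WEEK = 24 * 7


def scheduled_savings_fraction(hours_per_day, days_per_week):
    """Fraction of always-on compute hours avoided by running on a schedule."""
    if not (0 <= hours_per_day <= 24 and 0 <= days_per_week <= 7):
        raise ValueError("schedule must fit within a 24-hour day and 7-day week")
    running_hours = hours_per_day * days_per_week
    return 1 - running_hours / HOURS_PER_WEEK


# Hypothetical schedule: environments run 12 hours a day on weekdays only.
fraction = scheduled_savings_fraction(12, 5)
print(f"Compute hours avoided: {fraction:.1%}")
```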

Unit economics empower our teams to engineer more effectively: As our FinOps maturity has grown, so has our ability to apply unit economics on a daily basis. When we approach a new problem or feature, the team has built the experience and toolset to quickly analyze the impact of the new feature. In our case, we’re able to understand the cost of ingesting billing data for a customer of any size on a daily basis and also to prioritize optimization around that process to avoid any surprises.
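
At its simplest, a unit-economics metric divides the cost of a process by the number of business units it served. The sketch below uses made-up numbers and a hypothetical unit (customers whose billing data was ingested in a day) purely to illustrate the calculation.

```python
def unit_cost(total_cost, units):
    """Cost per business unit, e.g. per customer ingested per day."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


# Hypothetical figures: one day's ingestion pipeline cost and customers served.
daily_ingest_cost = 84.0
customers_ingested = 120
cost_per_customer = unit_cost(daily_ingest_cost, customers_ingested)
print(f"Cost per customer per day: ${cost_per_customer:.2f}")
```

Tracking this number over time, rather than the raw bill, is what lets a team tell growth in spend that follows growth in customers apart from growth caused by inefficiency.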

Better collaboration leads to more efficient use of resources: FinOps improved collaboration between our engineering and finance teams and encouraged them to work together to optimize cloud spending and reduce waste. By establishing shared goals and metrics, both teams now work towards a common objective of reducing costs while maintaining the quality of service. This collaboration can lead to more efficient use of resources and cost savings for the company.

Cloud Vendor Negotiations: FinOps encourages our finance and engineering teams to collaborate during cloud vendor negotiations. This helps us ensure we get the best deal possible from the cloud vendor. By working together, finance and engineering teams can negotiate contracts that are financially efficient and technically effective.

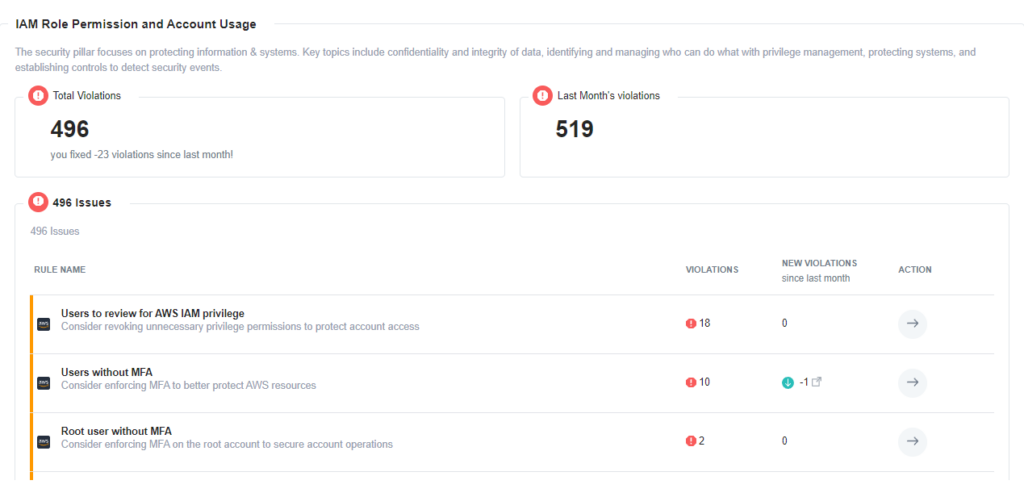

Reducing the risk of non-compliance: FinOps helps us ensure that cloud resources are being used securely and compliantly. This is important because it reduces the risk of non-compliance and ensures that cloud resources are being used efficiently. For example, our engineering team can work with finance to identify areas where cloud usage can be optimized to comply with regulations such as GDPR or HIPAA.

Improved Compliance Reporting: FinOps helped us generate regular reports on AWS resource usage and costs. These reports could be used to demonstrate compliance with industry requirements. By providing detailed reports on how resources are being used and who owns them, organizations can provide evidence of compliance and respond to audit requests more quickly and efficiently.

Strong Governance and Control Mechanisms: FinOps helped us implement governance and control mechanisms to ensure that AWS resources are used in compliance with regulatory requirements. These included implementing access controls, monitoring user activity, and enforcing security policies. By implementing strong governance and control mechanisms, organizations can reduce the risk of non-compliance and improve their compliance posture.

The first step in solving anything is admitting that there is a problem!

Engineers like to solve problems, and we must realize that their inability to take action isn’t because they don’t want to. If we don’t define, categorize, and attack the problem as an organizational practice with accountability at the user level, the status quo will remain: the engineering team will be treated punitively and will continue to treat FinOps as something we only worry about when alarm bells ring from the highest levels. By implementing FinOps practices, engineers can gain visibility into how their systems are performing from a financial perspective. They can identify areas where performance is lacking and take action to optimize costs without sacrificing performance.

nOps gives engineering and finance teams a common platform that they can use to describe and solve problems together. At nOps, we find ourselves constantly sharing the outcome of our work so that teams can identify a specific feature, group of resources, or service that needs attention.

Of course, taking the first step is a requirement for any journey to begin. The true magic of nOps is in meeting engineers with the tools and frameworks that they use every day to make optimization easy. In the next edition, we’ll talk about our journey in paying less, using less, and reducing our cloud spend by over 50% using the platform’s fully integrated, fully automated capabilities.

Let us help you save! Sign up for nOps today.