Efficient Resource Utilization and Rightsizing in Kubernetes | Part 1: Intro to Container Rightsizing

Efficient resource utilization in a Kubernetes cluster is vital for reducing costs, improving performance and scalability, minimizing resource contention, simplifying operations, supporting sustainable practices, and ensuring reliability.

In this blog series, we will take a structured approach to optimizing Kubernetes, starting from the basics and moving to more complex strategies. The series comprises five parts, each focusing on a key aspect of resource optimization in Kubernetes:

- Introduction to Container Rightsizing: the fundamentals, best practices, and strategies for adjusting resource requests and limits to match actual usage patterns, particularly in Amazon EKS.

- Horizontal vs. Vertical Scaling: HPA, VPA & Beyond: a comparison of Horizontal Pod Autoscaler (HPA), Vertical Pod Autoscaler (VPA), and how they intersect with other advanced scaling techniques.

- Bin Packing & Beyond: the changing landscape of efficiently packing and sizing your EKS nodes.

- Deep Dive into VPA Modes: a detailed look at the various modes of VPA, explaining their use cases and configurations.

- Effective Node Utilization and Reconsideration: optimizing performance at the most granular level.

In Part 1, we will focus on Container Rightsizing in EKS, with steps, examples, and best practices.

What is Container Rightsizing?

Container rightsizing involves adjusting the resource requests and limits for containers to match their actual usage patterns. This ensures that each container gets the right amount of CPU and memory, leading to more efficient resource utilization, improved performance, and cost savings.

In Kubernetes, proper container rightsizing is crucial to avoid under-provisioning and over-provisioning resources.

Let’s briefly discuss the problems that incorrect provisioning can cause before diving into the practical details of effective container rightsizing and the tools you can use to make it easier.

And if you’re looking to put the optimization of your EKS workloads on autopilot, consider booking a quick call with one of our AWS experts.

Resource Requests and Limits

Specifying how much memory and CPU each deployment needs is crucial for efficient resource utilization, performance and availability. Here are some potential problems when this is not done correctly:

Issues from Under-provisioning (Not Allocating Enough Resources)

Under-provisioning will cause availability and performance issues in your application, such as:

| Issue | Description |
| --- | --- |
| Pod Evictions | When a node runs short of memory or disk, the kubelet evicts pods to protect other workloads on the same node, starting with pods that use more than they requested. This can lead to application downtime or degraded performance. |
| Throttling | If a container tries to use more CPU than its limit allows, its CPU usage is throttled, slowing down the application and potentially causing timeouts or slow responses. |
| Out of Memory (OOM) Kills | When a container exceeds its memory limit, the kernel’s OOM killer terminates it, leading to application crashes, restarts, and possible data loss. |
| Performance Degradation, Latency and Timeouts | Insufficient resources can cause performance issues, such as slow response times, increased latency, and reduced throughput. |

Issues from Over-provisioning (Allocating Too Many Resources)

Similarly, over-provisioning is also problematic:

| Issue | Description |
| --- | --- |
| Wasted Resources & Higher Costs | Allocating more CPU and memory than needed can lead to underutilization, where resources are idle but still being paid for, leading to increased costs without performance benefits. |
| Resource Contention | If many pods over-request resources, it can cause nodes to appear full, triggering unnecessary scaling activities and increasing infrastructure costs. |
| Inefficient Cluster Utilization | Over-provisioning can lead to inefficient utilization of the cluster, where fewer pods fit on each node, causing more nodes to be used than necessary. |

Additionally, incorrect resource allocation for one application can adversely affect other applications running on the same cluster, leading to cascading performance issues. For example, if one application uses too much memory and another application on the same node does not have its memory requests properly configured, the first application might cause availability and performance issues for the second one. If the memory requests were correctly specified, the Kubernetes scheduler would instead place the second application on a node with sufficient available memory.

Fitting pods onto nodes so that node capacity is used as fully as possible is known as bin packing.

How to Configure Memory and CPU Requests and Limits for a Deployment in Kubernetes

Having outlined the importance of effective container rightsizing, let’s dive into the details of how to configure memory and CPU requests and limits for a deployment in Kubernetes. To do this, you need to define these settings in the resources section of the container specification in your deployment YAML file. Here’s a step-by-step guide:

- Create or Edit a Deployment YAML File: Start by creating a new deployment YAML file or editing an existing one.

- Define the resources Section: Within the container specification, add the resources section to set the requests and limits for CPU and memory.

Here is an example of how to configure memory and CPU requests and limits for a deployment in Kubernetes:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: example-deployment
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: example
      template:
        metadata:
          labels:
            app: example
        spec:
          containers:
            - name: example-container
              image: example-image:latest
              resources:
                requests:
                  memory: "512Mi"
                  cpu: "500m"
                limits:
                  memory: "1Gi"
                  cpu: "1"

In the above deployment YAML file, the resources section within the container specification is used to define the CPU and memory requests and limits for a container:

- requests: The minimum amount of resources that Kubernetes reserves for the container. The scheduler uses these values to decide which node the pod fits on.

  - memory: "512Mi": The container requests 512 MiB of memory. This is the minimum amount of memory that will be reserved for the container.

  - cpu: "500m": The container requests 500 millicores (0.5 cores) of CPU. This is the minimum amount of CPU that will be reserved for the container.

- limits: The maximum amount of resources that the container is allowed to use.

  - memory: "1Gi": The container is limited to 1 GiB of memory. If it tries to use more, it is terminated by the OOM killer.

  - cpu: "1": The container is limited to 1 core of CPU. If it tries to use more, it is throttled.

How to Figure Out the Correct Memory and CPU Requests and Limits for your Applications

To determine the correct memory and CPU requests and limits for your applications running on Kubernetes, you can use a combination of tools and techniques to monitor, analyze, and adjust resource usage. Here is a process you can follow:

- Deploy your application with initial guesses for resource requests and limits.

- Monitor the application for a set period using tools such as Prometheus and Grafana.

- Review the metrics to find average and peak usage.

- Adjust the resource requests to be slightly above the average usage.

- Set the resource limits to accommodate the peak usage without causing resource contention.

- Iterate on this process regularly or when significant changes are made to the application.
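As a rough sketch of the "review the metrics" step, and assuming Prometheus scrapes the kubelet's cAdvisor metrics (`container_cpu_usage_seconds_total` and `container_memory_working_set_bytes`), recording rules along these lines could track average and peak usage per container. The group name, the rule names, the `default` namespace, and the 7-day window are illustrative assumptions rather than values prescribed by the process above.

```yaml
# Prometheus rule file (sketch). All names and the 7-day window are assumptions.
groups:
  - name: rightsizing-usage
    interval: 5m
    rules:
      # Average CPU usage in cores per container over the last 7 days.
      - record: rightsizing:container_cpu_cores:avg_7d
        expr: |
          avg_over_time(
            rate(container_cpu_usage_seconds_total{namespace="default", container!=""}[5m])[7d:5m]
          )
      # Peak CPU usage in cores per container over the last 7 days.
      - record: rightsizing:container_cpu_cores:max_7d
        expr: |
          max_over_time(
            rate(container_cpu_usage_seconds_total{namespace="default", container!=""}[5m])[7d:5m]
          )
      # Average memory working set in bytes per container over the last 7 days.
      - record: rightsizing:container_memory_bytes:avg_7d
        expr: |
          avg_over_time(container_memory_working_set_bytes{namespace="default", container!=""}[7d])
      # Peak memory working set in bytes per container over the last 7 days.
      - record: rightsizing:container_memory_bytes:max_7d
        expr: |
          max_over_time(container_memory_working_set_bytes{namespace="default", container!=""}[7d])
```

Requests could then be set slightly above the `avg_7d` series and limits near the `max_7d` series, and the comparison repeated after each significant application change.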

Container Rightsizing Solutions

To implement a Container Rightsizing process and strategy similar to the one proposed above, we need to consider the tools and solutions available in the market. As containerized applications grow in complexity and scale, more advanced solutions are required to ensure the correct resources are allocated to containers to match their actual usage needs for both performance and cost efficiency. There are two main types of container rightsizing tools and solutions: Recommendation-Based Rightsizing and Fully Automated Rightsizing.

Recommendation-Based Rightsizing

Recommendation-based rightsizing tools analyze resource usage and provide suggestions for optimal resource allocation. These recommendations are based on historical usage data and performance metrics.

The advantage of recommendation-based rightsizing is control and flexibility. Administrators have full control over resource allocation decisions. You can review and make customized adjustments before implementation, ensuring recommendations align with the specific needs of your application as well as broader infrastructure strategies and policies. This review reduces the risk of unexpected changes or disruptions to application performance.

On the other hand, recommendation-based rightsizing is more time-consuming and labor-intensive, requiring continuous monitoring and manual adjustments. Changes are not implemented in real time, potentially leading to temporary periods of inefficiency or performance issues.

Popular tools for recommendation-based rightsizing:

| Tool | Description |
| --- | --- |
| Kube-ops-view | Provides a graphical view of Kubernetes cluster usage that can inform rightsizing decisions |
| Goldilocks | Offers recommendations for Kubernetes resource requests and limits based on historical data |
| Datadog | Provides monitoring and analytics with rightsizing recommendations |
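Goldilocks, for instance, is typically switched on per namespace with a label. The manifest below is a minimal sketch, assuming Goldilocks and the VPA recommender are already installed in the cluster; `example-namespace` is a hypothetical name.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: example-namespace          # hypothetical namespace name
  labels:
    # Label that Goldilocks watches for when deciding which namespaces to analyze.
    goldilocks.fairwinds.com/enabled: "true"
```

Once the namespace is labeled, recommendations for its workloads should appear in the Goldilocks dashboard, where they can be reviewed before anything is changed.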

Fully Automated Rightsizing

Fully automated rightsizing tools not only provide recommendations but also automatically adjust resource allocations in real-time. These tools use historical data and continuous monitoring to dynamically optimize resource usage without manual intervention.

The advantages of these tools include enhanced efficiency, as automatic adjustments are implemented promptly and in real time. Additionally, these tools reduce manual effort by automating routine resource management, freeing up administrators to focus on other tasks.

On the other hand, fully automated rightsizing comes with certain risks. Real-time changes can sometimes lead to unexpected performance issues if the algorithms do not accurately predict usage patterns. Automation also offers less control: automated adjustments may not always align with specific application requirements or broader infrastructure strategies. These automated systems can also be more complex to implement and manage.

Popular fully automated rightsizing solutions:

| Tool | Description |
| --- | --- |
| | Provides real-time cost visibility and automated resource rightsizing for Kubernetes clusters |
| Vertical Pod Autoscaler | Automatically adjusts the CPU and memory resources of running pods in a Kubernetes cluster based on actual usage, continuously optimizing resource allocation without manual intervention |

Different Kinds of Autoscaling in Kubernetes

Fully Automated Container Rightsizing is one type of autoscaling in Kubernetes. However, it’s important to understand the other types of autoscaling available to determine which ones are essential to implement in your cluster.

In Kubernetes, autoscaling is a mechanism to automatically adjust the capacity of your cluster and applications based on current demand and resource usage. Configuring autoscaling is crucial for the efficient resource utilization, performance, and availability of your applications. Here are the primary types of autoscaling available in Kubernetes:

- Horizontal Pod Autoscaler (HPA): Adjusts the number of pod replicas in a deployment, replica set, or stateful set based on observed CPU utilization (or other select metrics) to match the desired target.

- Cluster Autoscaler or Karpenter: Adjusts the number of nodes in a Kubernetes cluster based on the pending pods that cannot be scheduled due to insufficient resources.


- Vertical Pod Autoscaler (VPA): Adjusts the resource limits and requests (CPU and memory) for containers in pods to match the observed usage. This is also known as Container Rightsizing.
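To make the first item concrete, here is a minimal HPA sketch that targets the `example-deployment` shown earlier. The object name, replica bounds, and 70% target are illustrative assumptions.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: example-hpa                # hypothetical name
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: example-deployment
  minReplicas: 3                   # assumed lower bound
  maxReplicas: 10                  # assumed upper bound
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # percent of the CPU request, averaged across pods
```

Because the utilization target is measured against each container's CPU request, inaccurate requests directly distort HPA behavior, which is one reason rightsizing comes first.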

When combined, these three autoscaling tools create a robust and efficient system for resource management in a Kubernetes cluster. Each tool addresses different aspects of scaling, ensuring that applications remain performant, cost-effective, and reliable. However, these tools can also conflict; for a full explanation of each tool and how it interacts with others, check out part 2 of this series, Horizontal vs. Vertical Scaling: HPA, VPA & Beyond.

Vertical Pod Autoscaler (VPA) for Container Rightsizing

Vertical Pod Autoscaler (VPA) is a popular tool to automate container rightsizing. VPA is maintained as part of the Kubernetes autoscaler project, so it integrates closely with Kubernetes clusters (although it is installed as a separate add-on), making it a convenient choice for users who prefer native solutions over third-party tools. Ideally, when VPA is configured properly, we don’t need to follow the manual process detailed above to work out the correct CPU and memory values for our applications and adjust them by hand. VPA works well for stateless microservices by adjusting resources based on demand.

VPA Modes

VPA operates in four modes:

- Off: In this mode, VPA only provides recommendations but does not make any changes to the running pods. This mode is useful when you want to understand the resource requirements of your application without impacting the running environment.

- Auto: VPA automatically adjusts the resource requests of the pods based on recommendations. This mode is useful when you’ve already confirmed that VPA recommendations work well in your environment.

- Initial: VPA sets the resource requests only when a pod is created, without making adjustments afterward. This mode is suitable for workloads with predictable resource usage that don’t need dynamic adjustments.

- Recreate: VPA assigns resource requests on pod creation time and updates them on existing pods by evicting and recreating them. This mode is useful for workloads where it is acceptable to have temporary downtime due to pod recreation, such as stateless applications that can handle disruption.
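The mode is selected through `updatePolicy.updateMode` on the VPA object. A minimal sketch in recommendation-only mode, assuming the VPA components are installed and targeting the `example-deployment` from earlier (the object name `example-vpa` is hypothetical):

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: example-vpa                # hypothetical name
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: example-deployment
  updatePolicy:
    # One of "Off", "Initial", "Recreate", or "Auto".
    updateMode: "Off"
```

Starting with `"Off"` lets you compare the recommendations against your own measurements before allowing VPA to change running pods.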

How VPA Rightsizes Resources

VPA uses historical data to adjust the resource requests of your containers. It monitors CPU and memory usage and makes recommendations or automatic adjustments to ensure that your applications are neither over-provisioned nor under-provisioned. This helps in optimizing the utilization of resources and reducing costs.
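The recommendations are published in the status of the VPA object. The fragment below sketches the shape of that status as it might appear in the output of `kubectl get vpa example-vpa -o yaml`; the object name is hypothetical and every number is a made-up placeholder, not a measured result.

```yaml
status:
  recommendation:
    containerRecommendations:
      - containerName: example-container
        lowerBound:                # placeholder values throughout
          cpu: 250m
          memory: 256Mi
        target:                    # the values VPA would apply as requests
          cpu: 500m
          memory: 512Mi
        uncappedTarget:            # target before any min/max policy is applied
          cpu: 500m
          memory: 512Mi
        upperBound:
          cpu: "1"
          memory: 1Gi
```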

Challenges and Limitations

While VPA can be highly effective for stateless microservices, it has several challenges and limitations:

- Suitability for Different Workloads: It may not be suitable for batch jobs or stateful applications. Batch jobs have specific resource requirements that might not align with VPA adjustments, and stateful applications often have constraints that make dynamic resource changes more complex.

- Handling of Resource Limits: VPA requires careful management of upper limits to avoid scenarios where resource requests exceed the available capacity on the node. For example, if VPA increases the memory request of a pod beyond what the node can provide, it could lead to issues such as pod evictions or failures to schedule.

- Cluster State Awareness: VPA does not account for the entire cluster state, including node availability or overall resource limits. This lack of awareness can result in recommendations that are not feasible given the current cluster setup, leading to potential disruptions in service.

- Interaction with Horizontal Pod Autoscaler (HPA): When VPA and HPA are used together, there can be complex interactions. HPA adjusts the number of pod replicas based on metrics like CPU and memory usage, while VPA adjusts the resource requests of the pods themselves. Balancing these two can be challenging and might require additional configuration to ensure they work harmoniously.
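Two of these limitations can be partly mitigated in the VPA object itself. The sketch below caps recommendations with `minAllowed` and `maxAllowed`, and restricts VPA to memory so that an HPA scaling on CPU is not fighting over the same resource. The name and bounds are illustrative assumptions and would need to reflect your node sizes.

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: example-vpa-bounded        # hypothetical name
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: example-deployment
  updatePolicy:
    updateMode: "Auto"
  resourcePolicy:
    containerPolicies:
      - containerName: example-container
        # Manage memory only; CPU is left to the HPA in this sketch.
        controlledResources: ["memory"]
        minAllowed:
          memory: 128Mi            # assumed floor
        maxAllowed:
          memory: 2Gi              # assumed ceiling, kept below node capacity
```

This does not make VPA cluster-aware, but it keeps its requests within a range the nodes can actually satisfy.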

Given these challenges, VPA might not be the right choice in many cases, and many organizations still need to iterate on a manual container rightsizing process similar to the one proposed above.

What’s Next?

In this blog post, you learned about the importance of resource requests and limits, and the potential issues of incorrect allocation. You were also introduced to tools and techniques for determining the correct resources for your applications. Proper container rightsizing ensures that your Kubernetes cluster runs efficiently, minimizing costs and maximizing performance.

In the next installment of our series, Part 2: Horizontal vs. Vertical Scaling: HPA, VPA & Beyond, we’ll dive into the differences between horizontal and vertical scaling. We’ll compare the Horizontal Pod Autoscaler (HPA) and Vertical Pod Autoscaler (VPA) and discuss advanced scaling techniques to further optimize your Kubernetes environment.

And stay tuned for Part 3, where we’ll delve into Bin Packing strategies to achieve efficient resource allocation within your cluster.

By following this series, you’ll gain a comprehensive understanding of resource optimization in Kubernetes, helping you to build robust, cost-effective, and high-performing applications.

Kubernetes cost optimization is better with nOps

Are you already running on EKS and looking to automate your workloads at the lowest costs and highest reliability?

nOps Compute Copilot helps companies automatically optimize any compute-based workload. It intelligently provisions all of your compute, integrating with your AWS-native Karpenter or Cluster Autoscaler to automatically select the best blend of Savings Plans (SP), Reserved Instances (RI), and Spot. Here are a few of the benefits:

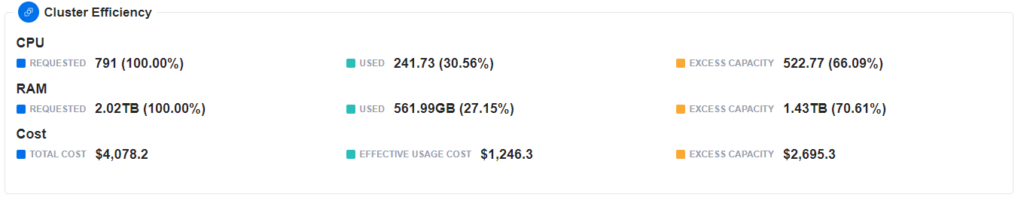

- See cluster efficiency at a glance: the nOps dashboard provides cluster efficiency metrics that let you visualize CPU and RAM requests and usage, excess capacity, and associated costs, making it easy to identify where containers need rightsizing.

- Fully automated container rightsizing (coming soon): nOps monitors your clusters and automates the full container rightsizing process on your behalf, so that you can save on autopilot — freeing your time to focus on building and innovating.

- Save effortlessly with Spot: Engineered to consider the most diverse variety of instance families suited to your workload, Copilot continually moves your workloads onto the safest, most cost-effective instances available for easy and reliable Spot savings.

- 100% Commitment Utilization Guarantee: Compute Copilot works across your AWS infrastructure to fully utilize all of your commitments, and we provide credits for any unused commitments.

Our mission is to make it faster and easier for engineers to optimize, so they can focus on building and innovating.

nOps manages over $1.5 billion in AWS spend and was recently ranked #1 in G2’s cloud cost management category. Book a demo to find out how to save in just 10 minutes.

Last Updated: May 21, 2025, EKS Optimization
