The Ultimate Guide to AWS S3 Pricing in 2026

Interested in understanding how Amazon calculates your S3 storage costs? If you're looking for ways to monitor, optimize, and reduce your S3 spend, we've got you covered with this complete guide.

We'll walk through every element that contributes to your bill, explain how to compute your S3 storage costs, and share strategies and tips for optimizing your spending.

Here’s a preview of this guide’s structure:

- What is Amazon S3, and why is S3 pricing so complicated?

- How does S3 pricing work, simplified

- A complete breakdown of Amazon S3 pricing by component

- Best practices for reducing your S3 costs

- Tools to help simplify your S3 bill

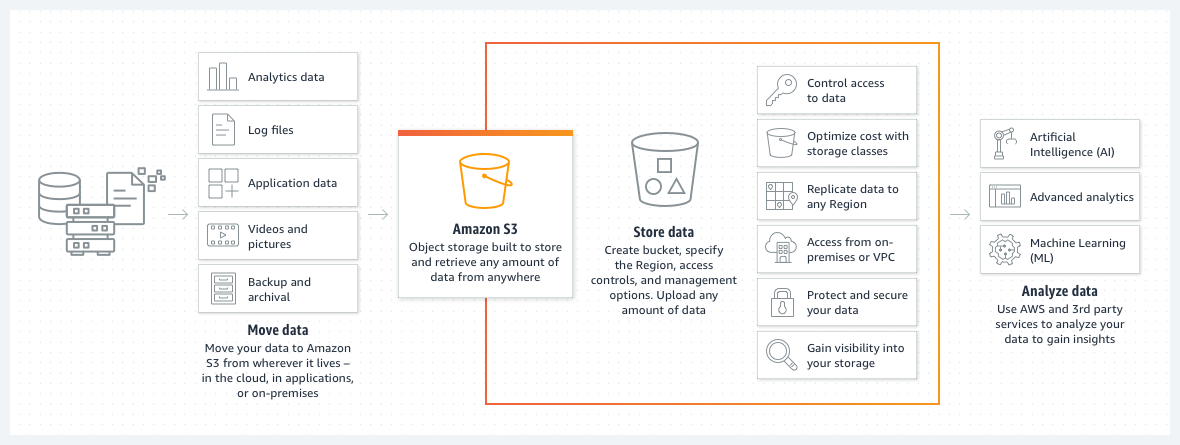

What is Amazon S3?

Amazon S3 (Simple Storage Service) offers highly scalable object storage with pricing that reflects its variety and flexibility. S3 is widely used for diverse purposes like data lakes, websites, mobile applications, backup and restore, archive, enterprise applications, IoT devices, and big data analytics.

Why is AWS S3 pricing so complicated?

With multiple storage classes designed for different access frequencies and data lifecycles, from frequently accessed data in S3 Standard to rarely accessed data in the S3 Glacier classes, pricing can get complex. Choosing the right storage class is only the first step: you also pay for requests, data retrievals, data transfer, and management features, and all of these rates vary by region and by how you use the service. Add-on features and management services such as S3 Replication and S3 Object Lock can add further costs, for example the storage for replicated copies or for locked object versions you must retain.

To optimize your S3 spending, it’s important to get a solid understanding of all of these components — we’ll make it as easy and straightforward as possible in this detailed guide.

Components of Amazon S3 Pricing

When you store data in Amazon S3, you're billed across several usage dimensions. Here's a breakdown of what you're actually paying for:

- Storage & Storage Class: You're charged for the total volume of data stored, and the price depends heavily on the storage class you select, from S3 Standard to S3 Glacier Deep Archive. Each class balances cost against access frequency and retrieval speed.

- Data Transfer: Inbound data transfer is generally free, but outbound data (for example, to the internet or to other AWS regions) incurs charges. Inter-region and cross-AZ transfers can also affect your bill.

- Tables: If you use Amazon S3 Tables or S3 Metadata (which generates queryable metadata tables for your objects), you pay for table storage, requests, and table maintenance, on top of any query costs from large-scale analytics integrations.

- Security, Access & Control: Features like AWS Key Management Service (KMS) encryption or access logging with AWS CloudTrail can add charges, depending on how they're configured.

- Replication: If you replicate data across regions (S3 Cross-Region Replication), you're charged for storage in the destination region plus the associated requests and data transfer.

- Requests & Data Retrievals: Every PUT, GET, LIST, COPY, or lifecycle transition request incurs a cost. Charges vary by request type and storage class, and the infrequent access and archival classes also charge per GB retrieved.

- Transform and Querying: Features like S3 Object Lambda or S3 Select let you run filtering or transformation logic on your data. These operations are billed on the volume of data scanned, processed, or returned.

- Bucket Location or Data Transfer Destination: Where your S3 bucket lives matters, because prices differ by AWS region. Transferring data between buckets in different regions also generates inter-region transfer charges.

- Management and Insights: Tools like S3 Storage Lens, object tagging, and lifecycle policies offer visibility and automation, but they can add costs if you enable advanced metrics or granular reporting.

How does Amazon S3 pricing work?

When you add up your total S3 costs, six components matter most:

- Storage: the amount of data you store (in GB)

- Requests and data retrievals: operations you perform on your objects, such as GET, PUT, COPY, and LIST, plus per-GB fees for retrieving data from some storage classes

- Data transfer modes: how and where you transfer data

- Management and analytics: management and analytics features and tools

- Replication: copying data to multiple storage locations for increased availability and durability

- S3 Object Lambda: data transformation and processing through S3 Object Lambda

These six components are the main factors determining your S3 costs. Amazon S3 uses a pay-as-you-go pricing model with no upfront payment or commitment: pricing is usage-based, so you pay only for what you use.

It's worth noting that AWS offers a free tier to new AWS customers that includes 5 GB of Amazon S3 storage in the S3 Standard storage class, 20,000 GET requests, 2,000 PUT, COPY, POST, or LIST requests, and 100 GB of data transfer out each month.
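Putting the main components together, here is a minimal Python sketch of a one-month S3 Standard estimate with the free-tier allowances above subtracted. It models only storage, requests, and internet egress. The GET and egress rates are assumptions based on US East (N. Virginia) list prices at the time of writing, and every constant is a placeholder to check against the AWS pricing page.

```python
from dataclasses import dataclass

# Placeholder rates in USD (assumptions; verify on the AWS S3 pricing page).
STANDARD_PER_GB_MONTH = 0.023   # S3 Standard, first 50 TB tier
PUT_PER_1000 = 0.005            # PUT, COPY, POST, LIST requests
GET_PER_1000 = 0.0004           # GET requests (assumed rate)
EGRESS_PER_GB = 0.09            # internet egress, first tier (assumed rate)

# Monthly free-tier allowances as described in this guide.
FREE_STORAGE_GB = 5
FREE_PUTS = 2_000
FREE_GETS = 20_000
FREE_EGRESS_GB = 100


@dataclass
class MonthlyUsage:
    storage_gb: float
    put_requests: int
    get_requests: int
    egress_gb: float


def estimate_month(usage: MonthlyUsage, free_tier: bool = True) -> dict:
    """Return a per-component cost breakdown (USD) for one month of S3 Standard."""
    storage_gb = usage.storage_gb
    puts, gets = usage.put_requests, usage.get_requests
    egress_gb = usage.egress_gb
    if free_tier:
        # Subtract the free allowances, never going below zero.
        storage_gb = max(0.0, storage_gb - FREE_STORAGE_GB)
        puts = max(0, puts - FREE_PUTS)
        gets = max(0, gets - FREE_GETS)
        egress_gb = max(0.0, egress_gb - FREE_EGRESS_GB)

    breakdown = {
        "storage": storage_gb * STANDARD_PER_GB_MONTH,
        "requests": puts / 1000 * PUT_PER_1000 + gets / 1000 * GET_PER_1000,
        "data_transfer": egress_gb * EGRESS_PER_GB,
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown


if __name__ == "__main__":
    usage = MonthlyUsage(storage_gb=105, put_requests=12_000,
                         get_requests=120_000, egress_gb=150)
    for item, cost in estimate_month(usage).items():
        print(f"{item:>13}: ${cost:,.2f}")
```

Management features, replication, and Object Lambda would be further line items in the same breakdown.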

S3 Storage Classes Explained

Storage is usually the largest component of an S3 bill. Different storage classes suit different use cases; the main deciding factors are how often you access the data, how quickly you need it back, and how much redundancy you require.

Let’s briefly summarize before breaking down each class in detail.

Amazon S3 Storage Classes: A Side-by-Side Comparison

| | S3 Standard | S3 Intelligent-Tiering* | S3 Express One Zone** | S3 Standard-IA | S3 One Zone-IA** | S3 Glacier Instant Retrieval | S3 Glacier Flexible Retrieval*** | S3 Glacier Deep Archive*** |
|---|---|---|---|---|---|---|---|---|
| Use cases | General purpose storage for frequently accessed data | Automatic cost savings for data with unknown or changing access patterns | High performance storage for your most frequently accessed data | Infrequently accessed data that needs millisecond access | Re-creatable infrequently accessed data | Long-lived data that is accessed a few times per year with instant retrievals | Backup and archive data that is rarely accessed and low cost | Archive data that is very rarely accessed and very low cost |
| First byte latency | milliseconds | milliseconds | single-digit milliseconds | milliseconds | milliseconds | milliseconds | minutes or hours | hours |
| Designed for availability | 99.99% | 99.9% | 99.95% | 99.9% | 99.5% | 99.9% | 99.99% | 99.99% |
| Availability SLA | 99.9% | 99% | 99.9% | 99% | 99% | 99% | 99.9% | 99.9% |
| Availability Zones | ≥3 | ≥3 | 1 | ≥3 | 1 | ≥3 | ≥3 | ≥3 |
| Minimum storage duration charge | N/A | N/A | 1 hour | 30 days | 30 days | 90 days | 90 days | 180 days |
| Retrieval charge | N/A | N/A | N/A | per GB retrieved | per GB retrieved | per GB retrieved | per GB retrieved | per GB retrieved |
| Lifecycle transitions | Yes | Yes | No | Yes | Yes | Yes | Yes | Yes |

Durability (all classes): Amazon S3 provides the most durable storage in the cloud. Based on its unique architecture, S3 is designed to exceed 99.999999999% (11 nines) data durability. Additionally, S3 stores data redundantly across a minimum of 3 Availability Zones by default, providing built-in resilience against widespread disaster. Customers can store data in a single AZ to minimize storage cost or latency, in multiple AZs for resilience against the permanent loss of an entire data center, or in multiple AWS Regions to meet geographic resilience requirements.

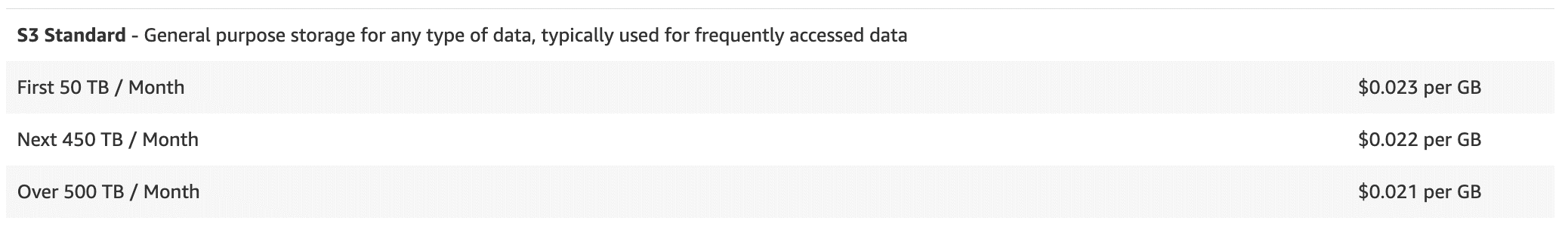

S3 Standard Pricing

This storage class is designed for frequently accessed data and provides high durability, performance, and availability (data is stored in a minimum of three Availability Zones). S3 Standard is best suited for general-purpose storage across a range of use cases that require frequent data access, such as websites, content distribution, or data lakes (in fact, more than 93% of S3 objects are stored in this class).

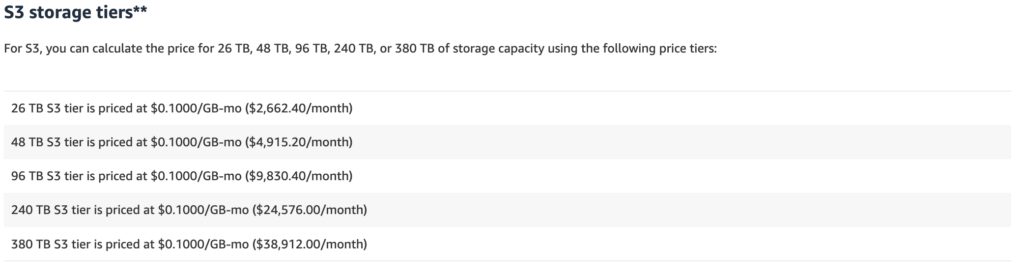

S3 Standard costs more per GB than every other storage class except S3 Express One Zone (the S3 Intelligent-Tiering Frequent Access tier is billed at the same rate). Pricing is tiered: in US East (N. Virginia), you pay $0.023 per GB per month for the first 50 TB, $0.022 per GB for the next 450 TB, and $0.021 per GB for storage above 500 TB.
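Tiered pricing means each slice of your data is billed at the rate of the tier it falls into, not the whole volume at one rate. A minimal Python sketch using the three S3 Standard tiers listed above; treating 1 TB as 1,024 GB is an assumption, and the function name is illustrative.

```python
# Tiers for S3 Standard as listed above (USD per GB-month).
# Assumption: 1 TB = 1,024 GB when sizing the tiers.
STANDARD_TIERS = [
    (50 * 1024, 0.023),      # first 50 TB
    (450 * 1024, 0.022),     # next 450 TB (50 TB to 500 TB)
    (float("inf"), 0.021),   # everything above 500 TB
]


def tiered_cost(quantity: float, tiers: list) -> float:
    """Charge each slice of `quantity` at the rate of the tier it falls into."""
    cost = 0.0
    remaining = quantity
    for tier_size, rate in tiers:
        billed = min(remaining, tier_size)
        cost += billed * rate
        remaining -= billed
        if remaining <= 0:
            break
    return cost


if __name__ == "__main__":
    # 100 TB: 50 TB at $0.023 plus 50 TB at $0.022
    print(f"${tiered_cost(100 * 1024, STANDARD_TIERS):,.2f} per month")
```

Storing 100 TB therefore costs about $2,304 per month rather than the $2,355 a flat $0.023 rate would suggest.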

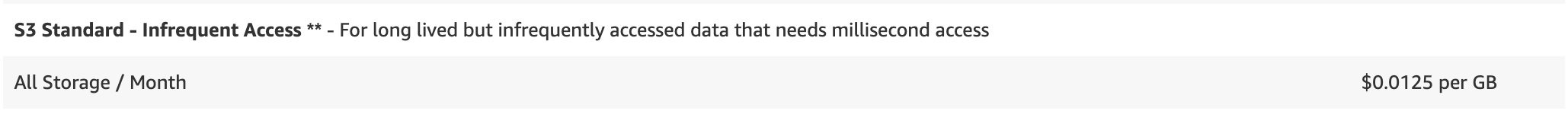

S3 Standard-Infrequent Access (Standard-IA)

This storage class offers a lower storage price for data that is accessed less frequently but still requires rapid access when needed. It performs like Amazon S3 Standard, with a 40–46% lower storage price in exchange for per-GB retrieval fees, which makes it a good fit for long-term storage, disaster recovery, and backups.

S3 Standard-IA storage starts at $0.0125 per GB per month, and retrievals are billed at $0.01 per GB.
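Whether Standard-IA actually saves money depends on how much you read back. The sketch below compares one month in S3 Standard and S3 Standard-IA for the same data, using the storage prices quoted in this guide and a $0.01/GB Standard-IA retrieval fee (treated as an assumption to verify). It ignores request charges, which are higher for Standard-IA, as well as the 30-day minimum storage duration and the 128 KB minimum billable object size, so it is only a first approximation.

```python
STANDARD_GB_MONTH = 0.023      # S3 Standard, first tier
STANDARD_IA_GB_MONTH = 0.0125  # S3 Standard-IA storage
IA_RETRIEVAL_PER_GB = 0.01     # Standard-IA retrieval fee (assumed rate)


def standard_cost(stored_gb: float) -> float:
    """Monthly storage cost in S3 Standard (no retrieval fee)."""
    return stored_gb * STANDARD_GB_MONTH


def standard_ia_cost(stored_gb: float, retrieved_gb: float) -> float:
    """Monthly storage plus retrieval cost in S3 Standard-IA."""
    return stored_gb * STANDARD_IA_GB_MONTH + retrieved_gb * IA_RETRIEVAL_PER_GB


def breakeven_retrieval_ratio() -> float:
    """GB retrieved per GB stored each month above which Standard-IA stops being cheaper."""
    return (STANDARD_GB_MONTH - STANDARD_IA_GB_MONTH) / IA_RETRIEVAL_PER_GB


if __name__ == "__main__":
    print(standard_cost(1_000))           # 1,000 GB kept in Standard
    print(standard_ia_cost(1_000, 100))   # same data, 10% read back this month
    print(breakeven_retrieval_ratio())
```

With these rates the break-even ratio is about 1.05, so on storage and retrieval alone Standard-IA stays cheaper until you read back roughly your whole dataset each month; in practice, request charges and the minimum-duration and minimum-size rules often decide the question.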

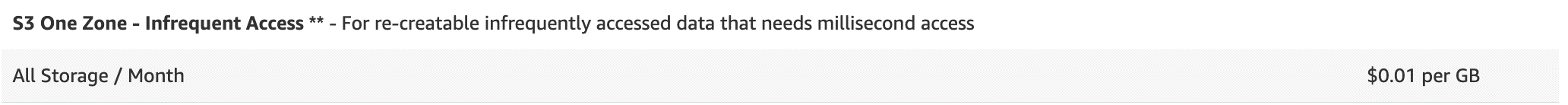

S3 One Zone-Infrequent Access (One Zone-IA)

Most S3 storage classes replicate data across multiple Availability Zones to ensure durability and high availability. S3 One Zone-IA instead keeps data in a single AWS Availability Zone. It suits infrequently accessed data that still needs quick retrieval, but it is not designed to survive the physical loss of that AZ.

If you don't need multi-AZ redundancy (for example, for secondary backup copies or other data that can be re-created), you can pay rates 20% lower than S3 Standard-IA, starting at $0.01 per GB per month.

S3 Intelligent-Tiering

For automated cost optimization, S3 Intelligent-Tiering monitors access patterns and automatically moves objects between a Frequent Access tier and an Infrequent Access tier. Objects in the Frequent Access tier are billed at the S3 Standard rate; objects that haven't been accessed for 30 consecutive days move to the Infrequent Access tier, which is discounted by 40–46%. As a result, you stop paying Standard rates for data nobody reads.

S3 Intelligent-Tiering charges a monthly monitoring and automation fee per object, but there are no data retrieval fees.
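Whether the monitoring fee pays off depends largely on object size. The sketch below compares S3 Intelligent-Tiering with S3 Standard for a bucket where some fraction of the data has gone cold. Several inputs are assumptions, not figures from this guide: a monitoring and automation fee of $0.0025 per 1,000 objects per month, an Infrequent Access tier priced like Standard-IA at $0.0125/GB, and the rule that objects smaller than 128 KB are not monitored and always stay at Frequent Access rates. The optional archive tiers are left out.

```python
STANDARD_GB_MONTH = 0.023                # also the Frequent Access tier rate
IT_INFREQUENT_GB_MONTH = 0.0125          # assumed Infrequent Access tier rate
MONITORING_PER_OBJECT = 0.0025 / 1000    # assumed monthly fee per monitored object
MIN_MONITORED_GB = 128 / (1024 * 1024)   # assumed 128 KB threshold, in GB


def standard_cost(objects: int, avg_object_gb: float) -> float:
    """Monthly cost of keeping every object in S3 Standard."""
    return objects * avg_object_gb * STANDARD_GB_MONTH


def intelligent_tiering_cost(objects: int, avg_object_gb: float,
                             cold_fraction: float) -> float:
    """Monthly cost when `cold_fraction` of the bytes sit in the Infrequent Access tier."""
    total_gb = objects * avg_object_gb
    if avg_object_gb < MIN_MONITORED_GB:
        # Small objects are never moved and never charged the monitoring fee.
        return total_gb * STANDARD_GB_MONTH
    hot_gb = total_gb * (1 - cold_fraction)
    cold_gb = total_gb * cold_fraction
    return (hot_gb * STANDARD_GB_MONTH
            + cold_gb * IT_INFREQUENT_GB_MONTH
            + objects * MONITORING_PER_OBJECT)


if __name__ == "__main__":
    # One million 10 MB objects, 60% of them untouched for 30+ days
    print(standard_cost(1_000_000, 0.01))
    print(intelligent_tiering_cost(1_000_000, 0.01, 0.6))
```

Under these assumptions, the per-object fee outweighs the tiering discount for cold objects smaller than roughly 250 KB, which is why Intelligent-Tiering tends to pay off most for larger objects.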

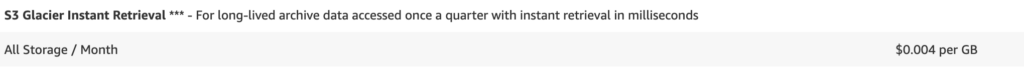

S3 Glacier Instant Retrieval

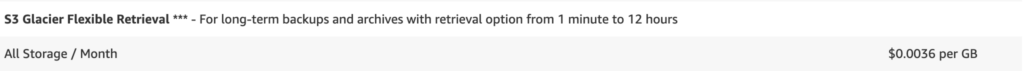

S3 Glacier Flexible Retrieval

Aimed at data archiving, this storage class provides extremely low-cost storage with retrieval times ranging from a few minutes to several hours (much slower than the other classes). This class is suitable for data only anticipated to be retrieved once or twice a year, not requiring instant access.

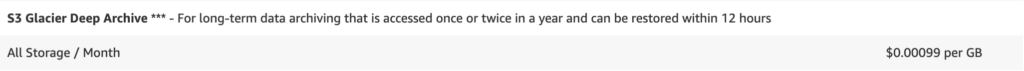

S3 Glacier Deep Archive

S3 on Outposts Storage Class


Amazon S3 Request and Data Retrieval

AWS charges for the number of requests made to your Amazon S3 buckets, such as PUT, GET, COPY, and POST requests. (Data retrieval is a type of S3 request.)

Each request type carries its own price, which depends on the storage class and adds to your overall S3 costs as request volume grows. For example, S3 Standard charges $0.005 per 1,000 PUT, COPY, POST, or LIST requests, compared to $0.05 per 1,000 for S3 Glacier Deep Archive, which is designed for rarely accessed data.
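Because request fees are charged per 1,000 requests regardless of object size, they can dominate the bill for workloads with many small objects. This sketch prices a batch of uploads using the two PUT rates quoted above; the lookup table and function name are illustrative, and you would extend the table with the other request types and classes you use.

```python
# USD per 1,000 PUT/COPY/POST/LIST requests, from the examples quoted above.
PUT_PRICE_PER_1000 = {
    "STANDARD": 0.005,
    "DEEP_ARCHIVE": 0.05,
}


def put_request_cost(storage_class: str, requests: int) -> float:
    """Cost of `requests` PUT/COPY/POST/LIST calls against the given storage class."""
    return requests / 1000 * PUT_PRICE_PER_1000[storage_class]


if __name__ == "__main__":
    uploads = 10_000_000  # e.g. ten million small log files
    for storage_class in PUT_PRICE_PER_1000:
        print(storage_class, f"${put_request_cost(storage_class, uploads):,.2f}")
```

Uploading ten million small objects straight into Deep Archive would cost about $500 in request fees alone, versus about $50 in S3 Standard, which is one reason to bundle small files into larger archives before moving them to archival classes.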

AWS S3 Data Transfer Pricing

Transferring data out of Amazon S3 to the internet or to other AWS regions (inter-region data transfer) incurs charges.

Transfers into Amazon S3 (ingress) are generally free, but egress (outbound) transfers beyond the free tier allowance are charged per gigabyte. If you use S3 Transfer Acceleration to speed up long-distance transfers, it adds its own per-GB charge.
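Internet egress is priced in tiers as well. A minimal sketch, assuming US East (N. Virginia) list prices at the time of writing: the first 100 GB per month free (aggregated across AWS services), then $0.09/GB up to 10 TB, $0.085/GB for the next 40 TB, $0.07/GB for the next 100 TB, and $0.05/GB beyond 150 TB. Subtracting the free allowance up front is a simplification of how AWS applies it.

```python
# Assumed tiers (GB, USD per GB), applied after the free allowance.
EGRESS_TIERS = [
    (10 * 1024, 0.09),
    (40 * 1024, 0.085),
    (100 * 1024, 0.07),
    (float("inf"), 0.05),
]
FREE_EGRESS_GB = 100  # assumed monthly free allowance


def egress_cost(gb_out: float) -> float:
    """Monthly internet egress cost for `gb_out` GB transferred out of S3."""
    remaining = max(0.0, gb_out - FREE_EGRESS_GB)
    cost = 0.0
    for tier_size, rate in EGRESS_TIERS:
        billed = min(remaining, tier_size)
        cost += billed * rate
        remaining -= billed
        if remaining <= 0:
            break
    return cost


if __name__ == "__main__":
    for gb in (50, 1_100, 20_000):
        print(gb, f"${egress_cost(gb):,.2f}")
```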

AWS S3 Management Features and Insights

Management and analytics features such as S3 Inventory, S3 Storage Class Analysis, S3 Storage Lens, and S3 Object Tagging provide detailed insights and management capabilities. They improve operational efficiency, but each one adds to your overall bill.

Exact costs depend on the feature. For example, S3 Storage Lens advanced metrics are billed per million objects monitored each month: $0.20 per million for the first 25 billion objects, $0.16 per million for the next 75 billion, and $0.12 per million for all objects beyond 100 billion.
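The Storage Lens tiers above work like the storage tiers, except that the unit is a million objects rather than a gigabyte. A short sketch (the function name is illustrative):

```python
# Storage Lens tiers from above, measured in millions of objects.
STORAGE_LENS_TIERS = [
    (25_000, 0.20),         # first 25 billion objects
    (75_000, 0.16),         # next 75 billion
    (float("inf"), 0.12),   # beyond 100 billion
]


def storage_lens_cost(objects_monitored: int) -> float:
    """Monthly cost for monitoring `objects_monitored` objects."""
    remaining = objects_monitored / 1_000_000  # convert to millions of objects
    cost = 0.0
    for tier_millions, rate in STORAGE_LENS_TIERS:
        billed = min(remaining, tier_millions)
        cost += billed * rate
        remaining -= billed
        if remaining <= 0:
            break
    return cost


if __name__ == "__main__":
    print(f"${storage_lens_cost(30_000_000_000):,.2f} per month")  # 30 billion objects
```

For 30 billion objects, the first 25 billion cost $5,000 and the next 5 billion cost $800.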

AWS S3 Replication Costs

S3 Replication copies your S3 data to another destination within AWS, which increases your cloud usage costs. Amazon bills replication as regular S3 usage, so the cost depends on the destination storage class and how the data is transferred.

Same-Region Replication (SRR) is generally the most cost-effective option: you pay S3 storage rates for the replica in its destination storage class, plus PUT request charges for each replicated object. For Infrequent Access tiers, data retrieval charges are also added.

The total cost of SRR is these charges plus the storage cost of the original data. Cross-Region Replication (CRR) adds inter-region data transfer fees on top, which typically makes it more expensive.
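To compare SRR and CRR for a one-time copy of existing data, the sketch below adds up the replica's first month of storage in S3 Standard, one replication PUT per object, and (for CRR only) inter-region transfer. The $0.02/GB inter-region rate is an assumption typical of transfers between US regions; Replication Time Control, batch replication, and retrieval fees for Infrequent Access sources are left out.

```python
REPLICA_STORAGE_PER_GB = 0.023   # destination in S3 Standard, first tier
PUT_PER_1000 = 0.005             # replication PUT requests into S3 Standard
INTER_REGION_PER_GB = 0.02       # assumed inter-region transfer rate


def replication_first_month(gb: float, objects: int, cross_region: bool) -> float:
    """Replica storage for one month + replication PUTs (+ transfer for CRR)."""
    cost = gb * REPLICA_STORAGE_PER_GB + objects / 1000 * PUT_PER_1000
    if cross_region:
        cost += gb * INTER_REGION_PER_GB
    return cost


if __name__ == "__main__":
    gb, objects = 1_000, 1_000_000
    print("SRR:", replication_first_month(gb, objects, cross_region=False))
    print("CRR:", replication_first_month(gb, objects, cross_region=True))
```

Only the storage portion recurs in later months; PUT and transfer charges apply again only to newly written objects.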

S3 Object Lambda

AWS S3 Object Lambda integrates with your existing applications, allowing for the on-the-fly processing of S3 data using AWS Lambda functions. This AWS service modifies data retrieved from S3 Storage, transforming it for compatibility with applications that could not previously process it directly. Simply add your custom code, and S3 Object Lambda will handle the transformation and return the processed data to your application.

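S3 Object Lambda charges $0.005 per GB of data returned, in addition to the standard S3 GET request charges and the AWS Lambda charges for running your function.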
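For a sense of what "your custom code" looks like, here is a minimal sketch of an Object Lambda function in Python using boto3. The event fields (`getObjectContext`, `inputS3Url`, `outputRoute`, `outputToken`) and the `write_get_object_response` call follow the AWS documentation for Object Lambda. The upper-casing `transform` is a hypothetical placeholder for your own logic, and the optional `s3_client` parameter is an addition so the handler can be exercised locally without AWS.

```python
import urllib.request


def transform(body: bytes) -> bytes:
    """Hypothetical transformation: upper-case the object's bytes."""
    return body.upper()


def lambda_handler(event, context, s3_client=None):
    ctx = event["getObjectContext"]
    if s3_client is None:
        import boto3  # imported lazily so the sketch can run without AWS
        s3_client = boto3.client("s3")
    # Object Lambda passes a presigned URL for the original object.
    with urllib.request.urlopen(ctx["inputS3Url"]) as resp:
        original = resp.read()
    # Send the transformed bytes back to the application that called GetObject.
    s3_client.write_get_object_response(
        Body=transform(original),
        RequestRoute=ctx["outputRoute"],
        RequestToken=ctx["outputToken"],
    )
    return {"statusCode": 200}
```

Every byte this function returns counts toward the per-GB fee, so filtering data down inside `transform` also reduces the Object Lambda charge.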

Best Practices to Reduce your AWS S3 Costs

1. Use Lifecycle Policies

Amazon S3 Lifecycle policies automate data management by transitioning objects to more cost-effective storage classes or deleting them based on rules you define. In the S3 Management Console, for example, you can set rules that move objects to S3 Standard-IA 30 days after creation and to S3 Glacier Flexible Retrieval after 90 days. Lifecycle rules act on object age, not on how often an object is accessed; for access-based tiering, use S3 Intelligent-Tiering.

Additionally, expiration actions that delete outdated logs, a rule that aborts incomplete multipart uploads, and tags that categorize your data give you more precise control over which datasets each lifecycle rule applies to.
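The same rules can be applied as code. A minimal sketch with boto3's `put_bucket_lifecycle_configuration`; the bucket name, the `logs/` prefix, the 365-day expiration, and the 7-day multipart cleanup window are hypothetical values to adjust. `GLACIER` is the API name for S3 Glacier Flexible Retrieval.

```python
def build_lifecycle_configuration(prefix: str = "logs/") -> dict:
    """Lifecycle rules: Standard-IA at 30 days, Glacier Flexible Retrieval at 90, delete at 365."""
    return {
        "Rules": [
            {
                "ID": "tier-then-expire-logs",   # hypothetical rule name
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": 30, "StorageClass": "STANDARD_IA"},
                    {"Days": 90, "StorageClass": "GLACIER"},
                ],
                "Expiration": {"Days": 365},
                # Clean up parts of multipart uploads that never completed.
                "AbortIncompleteMultipartUpload": {"DaysAfterInitiation": 7},
            }
        ]
    }


def apply_lifecycle(bucket: str, s3_client=None) -> None:
    """Replace the bucket's lifecycle configuration with the rules above."""
    if s3_client is None:
        import boto3  # imported lazily so the sketch can be tested without AWS
        s3_client = boto3.client("s3")
    # Note: this call overwrites any existing lifecycle rules on the bucket.
    s3_client.put_bucket_lifecycle_configuration(
        Bucket=bucket,
        LifecycleConfiguration=build_lifecycle_configuration(),
    )
```

Calling `apply_lifecycle("example-bucket")` with valid AWS credentials would install the rules; because the call replaces the whole configuration, merge any existing rules into the dictionary first.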

2. Delete Unused Data

3. Compress Data Before You Send to S3

You can reduce Amazon S3 charges related to data storage and transfer by compressing data before uploading it. Compression shrinks the volume of data, lowering both storage and transfer costs.

Common compression algorithms include GZIP and BZIP2, which work well for text and offer good compression ratios. LZMA is more processing-intensive but achieves higher compression ratios. For binary data or when speed matters, LZ4 is a popular choice because it compresses and decompresses very quickly. Columnar file formats like Parquet, which support several compression codecs, reduce storage further and make querying complex datasets more efficient.
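Python's standard library includes GZIP, BZIP2, and LZMA, so a quick experiment shows the size difference (and therefore the storage and transfer difference) on your own data. The synthetic log lines below are made up for illustration and compress far better than most real data; LZ4 and Parquet need third-party packages, so they're omitted.

```python
import bz2
import gzip
import lzma

STANDARD_GB_MONTH = 0.023  # S3 Standard rate from this guide


def compressed_sizes(data: bytes) -> dict:
    """Size in bytes of `data` uncompressed and under each standard-library codec."""
    return {
        "raw": len(data),
        "gzip": len(gzip.compress(data)),
        "bzip2": len(bz2.compress(data)),
        "lzma": len(lzma.compress(data)),
    }


def monthly_storage_cost(num_bytes: int) -> float:
    """S3 Standard storage cost for `num_bytes`, treating 1 GB as 1024**3 bytes."""
    return num_bytes / 1024**3 * STANDARD_GB_MONTH


if __name__ == "__main__":
    # Synthetic, highly repetitive log data (made up for illustration).
    sample = b"".join(
        b"2026-02-09T12:00:%02dZ INFO GET /index.html status=200\n" % (i % 60)
        for i in range(100_000)
    )
    for codec, size in compressed_sizes(sample).items():
        print(f"{codec:>5}: {size:>10,} bytes  ${monthly_storage_cost(size):.6f}/month")
```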

4. Use S3 Select to retrieve only the data you need

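With S3 Select, you write SQL-like statements that filter an object's contents so that S3 returns only the data relevant to your query. Instead of downloading the entire file, processing it in your application, and discarding what you don't need, you are billed for the data scanned and returned, which cuts data transfer and processing costs. Note that AWS closed S3 Select to new customers in July 2024; existing customers can keep using it, and AWS points new workloads to alternatives such as Amazon Athena.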
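For existing S3 Select users, here is a minimal boto3 sketch of `select_object_content` against a CSV object with a header row. The function name is illustrative, and it collects only the `Records` events from the response's event stream, ignoring `Stats` and `End` events.

```python
def select_csv(bucket: str, key: str, sql: str, s3_client=None) -> bytes:
    """Run an S3 Select query on a CSV object and return the matching rows as CSV bytes."""
    if s3_client is None:
        import boto3  # imported lazily so the function can be tested with a stub client
        s3_client = boto3.client("s3")
    response = s3_client.select_object_content(
        Bucket=bucket,
        Key=key,
        Expression=sql,
        ExpressionType="SQL",
        InputSerialization={"CSV": {"FileHeaderInfo": "USE"}},  # first row is a header
        OutputSerialization={"CSV": {}},
    )
    rows = []
    for event in response["Payload"]:
        if "Records" in event:
            rows.append(event["Records"]["Payload"])
    return b"".join(rows)
```

A hypothetical call such as `select_csv("example-bucket", "logs/2026-02.csv", "SELECT s.path FROM S3Object s WHERE s.status = '500'")` would return only the matching paths instead of the whole file.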

5. Choose the right AWS Region and Limit Data Transfers

Selecting the right AWS region for your S3 storage can have a significant impact on costs, especially when it comes to data transfer fees. Data stored in a region closer to your users or applications typically reduces latency and transfer costs, because AWS charges for data transferred out of an S3 region to another region or the internet. Check out this full guide to data transfer for practical tips on reducing your costs.

Tools for S3 cost visibility and management

One of the most important ways to reduce your S3 costs is with automated monitoring, analytics, and optimization tools — here are the platforms that can help.

AWS Pricing Calculator

The AWS Pricing Calculator is a useful tool for estimating and managing the costs of various AWS services, including S3 bucket pricing. Users can model their solutions before building them, explore pricing options for different scenarios, and create templates for recurrent use.

While the AWS Pricing Calculator is a great free tool, it isn’t always the most beginner-friendly option. It requires a certain amount of expertise and knowledge to appropriately fill out its highly technical data fields.

S3 Storage Lens

Amazon S3 Storage Lens is an analytics tool that enhances visibility and management of your S3 storage.

It offers a dashboard and metrics to assess operational and cost efficiencies. Key features include identifying costly data access patterns to optimize costs, redistributing data across storage classes to save money, and monitoring replication to avoid unnecessary redundancy costs. You can also set customizable metrics and alerts to manage and mitigate potential issues.

However, Storage Lens isn't entirely free: its default free metrics cost nothing, but advanced metrics and recommendations are billed per million objects monitored each month.

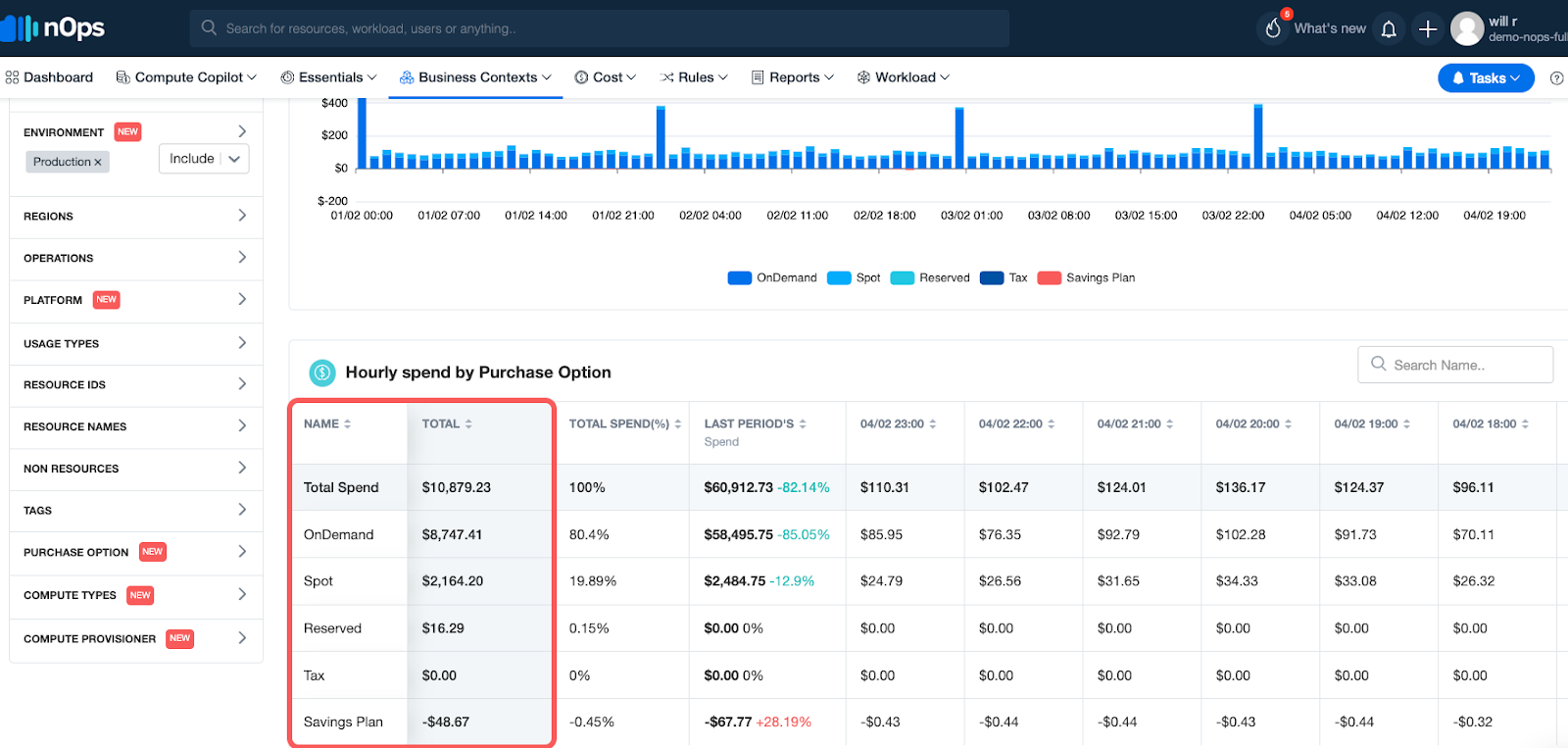

Monitor your AWS S3 Costs with nOps

Whether you’re looking to understand and optimize just your S3 costs or your entire cloud bill, nOps can help. Its free cloud cost management tool, Business Contexts, gives you complete cost visibility and intelligence across your entire AWS infrastructure. Analyze S3 costs by product, feature, team, deployment, environment, or any other dimension.

If your AWS bill is a big mystery, you’re not alone. nOps makes it easy to understand and allocate 100% of your AWS bill, even fixing mistagged and untagged resources for you.

nOps also offers a suite of ML-powered cost optimization features that help cloud users reduce their costs by up to 50% on autopilot, including:

Compute Copilot: automatically selects the optimal compute resource at the most cost-effective price in real time for you — also makes it easy to save with Spot discounts

ShareSave: automatic life-cycle management of your EC2/RDS/EKS commitments with a risk-free guarantee

nOps Essentials: set of easy-apply cloud optimization features including EC2 and ASG rightsizing, resource scheduling, idle instance removal, storage optimization, and gp2 to gp3 migration

nOps processes over $2 billion in cloud spend and was recently named #1 in G2's cloud cost management category.

You can book a demo to find out how nOps can help you start saving on S3 and other AWS costs today.


Frequently Asked Questions

How much does S3 cost?

S3 Standard storage in the US East (N. Virginia) region costs $0.023 per GB per month, plus charges for PUT, GET, lifecycle transitions, and data transfer. Amazon S3 storage cost varies by storage class, usage pattern, and region. For example, S3 Glacier Deep Archive drops to $0.00099/GB but adds retrieval costs and delays. It’s pay-as-you-go and scales automatically. You’re also billed for metadata requests and features like replication, so accurate forecasting requires full visibility into object counts, access frequency, and lifecycle policies. Use the AWS Pricing Calculator for estimates, or tools like nOps to track actual usage.

Is AWS S3 free?

Amazon S3 is not free beyond the Free Tier. Under its 12-month Free Tier, AWS offers 5 GB of S3 Standard storage with 20,000 GET and 2,000 PUT requests per month. After that, S3 is fully pay-as-you-go. Unlike Cloudflare R2 or Firebase, S3 has no always-free storage allowance, so you'll start accruing charges for storage, API calls, and data transfer out. Note: S3 doesn't charge for data transferred in, and transfers from S3 to Amazon CloudFront are free, but outbound traffic to the internet is billed (and CloudFront charges for its own delivery). Also, if you're using S3 with other AWS services (e.g., Athena or Glue), those services may generate extra charges.

What is the cheapest S3 storage class?

When it comes to Amazon S3 storage pricing, S3 Glacier Deep Archive is the cheapest option at $0.00099/GB/month, designed for long-term, rarely accessed data. It's about 23x cheaper than S3 Standard, but retrieval takes up to 12 hours with Standard retrieval or up to 48 hours with Bulk retrieval, and there is no expedited option. It's ideal for compliance archives, backups, or anything you rarely (if ever) need to access. S3 Intelligent-Tiering is a good second choice: it moves objects between tiers automatically, costs about $0.0036/GB in its optional Archive Access tier, and returns data in milliseconds while objects sit in the Frequent or Infrequent Access tiers.

Is S3 cheaper than a database?

It depends on the use case. For storing static files, backups, logs, or large binary objects (e.g., images, videos, models), S3 is significantly cheaper than a traditional database. Storing 1 TB in S3 Standard costs ~$23/month; the same in RDS (storage + compute + IOPS) could cost hundreds. But S3 lacks query features: you can't filter or search without services like Athena or Redshift Spectrum, which add cost. If you need indexes, transactions, or low-latency queries, a database is worth the extra cost. For raw storage, though, S3 is almost always the cheaper choice.

Last Updated: February 9, 2026, AWS Pricing and Services
