K0s Vs. K3s Vs. K8s: The Differences And Use Cases

Kubernetes (K8s) is a powerful container orchestration platform that is becoming increasingly popular in cloud computing. It is used to automate the deployment, scaling, and management of applications in clusters of containers. With Kubernetes, organizations can manage their applications with greater efficiency and reliability.

However, you might have also heard of the terms K0s and K3s in the Kubernetes ecosystem. Both are lightweight, easy-to-deploy distributions of Kubernetes (K8s): K3s is optimized for resource-constrained environments and simpler use cases, and K0s packages a complete cluster into a single binary with minimal host dependencies. Upstream K8s, by contrast, is the full-featured, highly scalable platform suited for complex, large-scale applications.

In this article, we will explore the key differences between upstream Kubernetes and these two popular distributions.

What is K8s?

Image credits: Kubernetes documentation

K8s is the most popular container orchestration platform and the standard for modern containerized applications. It is open source and can be used by any organization, regardless of size. It is scalable, flexible, and extensible and can be used for both on-premise and cloud deployments.

K8s is designed for distributed systems: it scales from a single-node test cluster on a laptop to very large multi-node clusters, and its fault tolerance makes it suitable for enterprise-level application deployment and management.

K8s as a General-Purpose Container Orchestrator

Kubernetes, or K8s, is a general-purpose container orchestration platform. It allows users to deploy and manage applications in various environments, including on-premise and cloud-based. This provides flexibility and scalability for applications running in containers.

K8s takes care of the heavy lifting, such as scheduling, scaling, and service discovery. It also provides support for deploying services across multiple nodes and clusters. Furthermore, K8s offers features like rolling updates, self-healing, and auto-scaling, which make it an ideal choice for production workloads.
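To make rolling updates and self-healing more concrete, here is a minimal sketch of how those features are requested in a Deployment manifest. It builds the manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f -` accepts. The helper name `build_deployment`, the app name `web`, and the `nginx:1.27` image are placeholders chosen for illustration, not something this article prescribes.

```python
import json


def build_deployment(name: str, image: str, replicas: int = 3) -> dict:
    """Return an apps/v1 Deployment manifest as a plain dict (illustrative helper)."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Self-healing: the controller keeps this many pods running.
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            # Rolling update: add one new pod at a time and never drop
            # below the desired replica count during a rollout.
            "strategy": {
                "type": "RollingUpdate",
                "rollingUpdate": {"maxSurge": 1, "maxUnavailable": 0},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": 80}],
                            # The kubelet restarts the container if this probe fails.
                            "livenessProbe": {
                                "httpGet": {"path": "/", "port": 80},
                                "periodSeconds": 10,
                            },
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Placeholder app name and image; substitute your own.
    print(json.dumps(build_deployment("web", "nginx:1.27"), indent=2))
```

Because K3s and K0s are Kubernetes distributions, the same manifest should apply unchanged on any of the three platforms discussed here.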

What is K3s?

Image credits: K3S documentation

K3s is a lightweight, certified Kubernetes distribution originally developed by Rancher Labs (now part of SUSE). It packages the Kubernetes components into a single binary and can deploy and run containers in almost any environment. It is designed to run on bare metal, virtual machines, or on top of cloud platforms like AWS and GCP.

Therefore, K3s is well suited for organizations that want to run their own infrastructure while still being able to use the advantages of containers. For example, let’s say that you want to use containers to run your apps, but you also want to ensure that you have total control over the infrastructure being used. In that case, K3s can be a good choice because it lets you run Kubernetes on hardware you manage yourself, including small servers and edge devices.

K3s as an official CNCF Sandbox Project

K3s was created by Rancher and accepted as an official CNCF Sandbox project on August 19, 2020. It is a lightweight yet powerful certified Kubernetes distribution designed for production workloads across various infrastructures. It’s a strong choice for organizations looking to reduce the complexity of their Kubernetes setup while keeping access to the latest features and updates.

K3s is designed to run in both cloud and on-premises environments, making it a workable solution for both small and large-scale deployments. It also integrates with existing storage and network infrastructure, which helps organizations get up and running with Kubernetes quickly. In addition, K3s is a certified Kubernetes distribution, meaning it passes the Kubernetes conformance tests. Its Sandbox status marks it as an early-stage CNCF project and is not in itself a guarantee of reliability or security.

What are the primary differences between K3s and K8s?

The primary difference between K3s and K8s is that K3s is a lightweight, easy-to-use distribution of Kubernetes designed for resource-constrained environments, while upstream K8s is the full, unbundled platform that you assemble and operate yourself. As a result, K3s is ideal for edge computing and IoT applications, while K8s is better suited for large-scale production deployments.

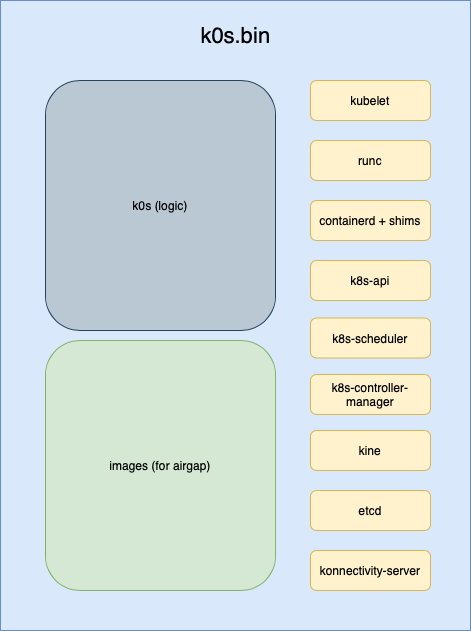

What is K0s?

Image credits: K0s documentation

K0s is an open source, lightweight Kubernetes distribution developed by Mirantis. It packages everything needed to run a cluster into a single binary with minimal host dependencies, and the “zero” in its name refers to its goals of zero friction, zero dependencies, and zero cost.

K0s was engineered to run containerized applications efficiently across bare metal, on-premises, edge, IoT, and cloud environments. Because it is standard Kubernetes underneath, it provides the same fault tolerance and scalability model as K8s, with a simpler installation and upgrade path.

K0s vs K3s

K0s is a lightweight and secure Kubernetes distribution that runs on bare-metal and edge-computing environments. It is developed by Mirantis and offers an alternative to k3s, which comes from Rancher.

Both k3s and k0s are designed to be lightweight, and both ship as a single binary. They differ mainly in what they bundle by default. K3s includes components such as the Traefik ingress controller, a service load balancer, and a local-path storage provisioner, and it uses SQLite as its default datastore. K0s stays closer to upstream Kubernetes: it bundles fewer add-ons, uses etcd by default for multi-node control planes, and keeps control plane nodes isolated from application workloads unless you opt in. Thus, k0s is a good alternative to k3s if you want a minimal, upstream-aligned distribution and prefer to choose add-ons yourself.

K0s vs K3s vs K8s: What are the differences?

K0s and K3s are distributions of K8s, so all three expose the same Kubernetes API for deploying and managing containers. Because their functionality is so similar, it can be difficult to choose between them. With that in mind, here are the critical differences between K0s, K3s, and K8s:

K0s is a lightweight Kubernetes distribution developed by Mirantis. It ships as a single binary with minimal host dependencies and a small set of default components, and it runs on bare metal, edge devices, and cloud infrastructure.

K3s is a lightweight Kubernetes distribution originally developed by Rancher Labs and now a CNCF Sandbox project. It can deploy and run containers in almost any environment and is designed to run on bare metal, virtual machines, or on top of cloud platforms like Amazon Web Services (AWS) and Google Cloud Platform (GCP). K3s is best suited for organizations that want to run their own infrastructure while still being able to benefit from the advantages of containers.

K8s is the most popular container orchestration platform and the standard for modern containerized applications. It is open source and can be used by any organization, regardless of size. It is scalable, flexible, and extensible and can be used for both on-premise and cloud deployments.

The choice between K0s, K3s, and K8s depends on the user’s specific requirements. K3s is an excellent choice for small-scale deployments as it requires minimal resources and provides a full-fledged Kubernetes experience. On the other hand, for larger-scale deployments and more complex projects, K8s is the ideal option as a general-purpose orchestrator with powerful features. Finally, K0s might be the best choice when simplicity is most important, as it provides a straightforward deployment process and a minimal default installation. Depending on the user’s needs and preferences, each option provides unique advantages for running Kubernetes.
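The rule of thumb above can be written down as a small decision helper. This is only a sketch of the guidance in this article, not an official selection tool: the `Requirements` fields and the `suggest_distribution` function are hypothetical names, and a real decision would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    """Hypothetical summary of what a team needs from its cluster."""
    large_scale: bool       # large or complex production deployment
    simplicity_first: bool  # simplest possible install matters most


def suggest_distribution(req: Requirements) -> str:
    """Map requirements to a platform, following the article's rule of thumb."""
    if req.large_scale:
        # Larger, more complex projects: general-purpose upstream Kubernetes.
        return "K8s"
    if req.simplicity_first:
        # Simplicity is the top priority: minimal single-binary install.
        return "K0s"
    # Small-scale deployments with limited resources.
    return "K3s"


if __name__ == "__main__":
    print(suggest_distribution(Requirements(large_scale=False, simplicity_first=False)))
```

Checking scale first is a deliberate choice in this sketch: it treats the needs of a large deployment as outweighing a preference for a simple install.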

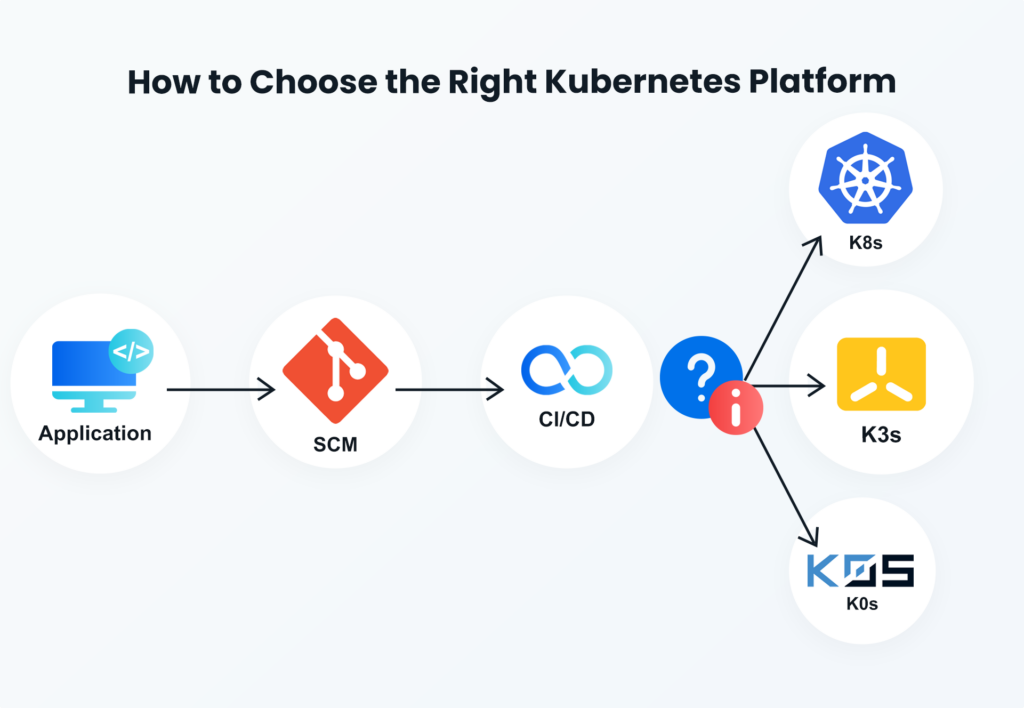

How to Choose the Right Kubernetes Platform

K0s, K3s, and K8s are all powerful container orchestration platforms with their own unique features and benefits. Before choosing one of these three platforms, you should ask yourself a few key questions.

Here’s a quick overview:

– What are your needs as an organization and application owner?

– What are your current infrastructure and deployment requirements?

– What is your budget for this project?

Considering these questions will make it easier to choose the right platform for your organization.

Let’s take a closer look at these questions and discuss how each platform stacks up against the other.

- What are your needs as an organization and application owner?

K0s, K3s, and K8s all provide similar functionality, so the main difference is in their design, deployment, and installation. You have to decide which one works best for you. For example, if you want a minimal cluster with few host dependencies, K0s might be a good choice. If you need full control over every component of a large cluster, upstream K8s might be a better option. Meanwhile, K3s might be a good option for edge devices or small existing servers. When choosing a platform, you also need to consider your team’s skill set and existing knowledge.

- What are your current infrastructure and deployment requirements?

The requirements of your deployment environment are another critical consideration when choosing between K0s, K3s, and K8s. If you’re already running containers, you need to decide which platform best suits your current infrastructure. For example, if you’re already running on GCP, a managed K8s service such as GKE is likely the best choice. If you’re running on bare metal or a small virtual machine, K3s or K0s is usually a better fit. The same applies to your existing architecture. If you’re running a hybrid architecture that spans on-premises and cloud environments, a lightweight distribution such as K3s is likely the best choice for the on-premises and edge parts. If you’re running an all-cloud architecture, K8s is your best option.

- What is your budget for this project?

The final consideration when choosing a platform is your budget. All three platforms are open source and free to use, so the real cost lies in the hardware they run on and the skills needed to operate them. Upstream K8s typically demands the most operational expertise, while K0s and K3s reduce that overhead. K3s is a cost-effective option and works well for organizations that are still in the process of transitioning to containers.

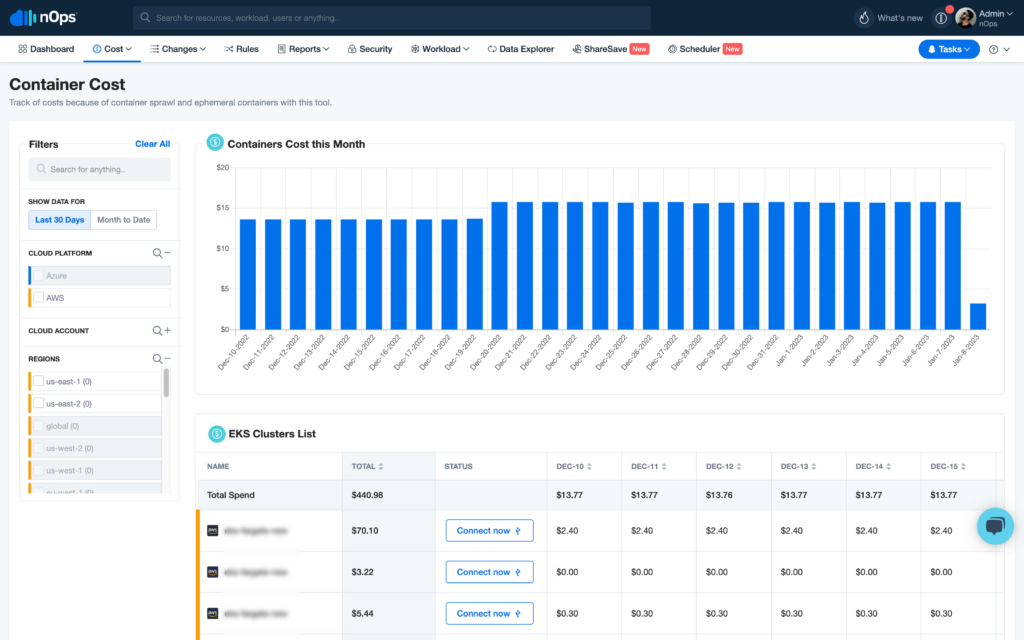

nOps For Any Kubernetes Platform

No matter which Kubernetes platform you pick, cloud cost management is one of the biggest challenges for high-growth companies these days.

nOps Compute Copilot constantly manages the scheduling and scaling of your EKS workloads for the best price and stability. Here are a few of the benefits:

- Optimize your RI, SP and Spot automatically for 50%+ savings — Copilot analyzes your organizational usage and market pricing to ensure you’re always on the best options.

- Reliably run business-critical workloads on Spot. Our ML model predicts Spot terminations 60 minutes in advance.

- All-in-one solution — get all the essential cloud cost optimization features (cost allocation, reporting, scheduling, rightsizing, & more)

Copilot is entrusted with over one billion dollars of cloud spend. Join our satisfied customers who recently named us #1 in G2’s cloud cost management category by booking a demo today.

Last Updated: September 16, 2025, EKS Optimization
