nOps Achieves AWS GenAI Competency

We’re proud to announce that nOps has achieved the Amazon Web Services (AWS) Generative AI Competency, adding another designation to our growing portfolio of AWS recognitions. This accomplishment reflects our technical expertise in delivering secure, scalable, and production-ready generative AI solutions on AWS.

As organizations race to adopt GenAI, the challenge isn’t just choosing a model—it’s ensuring the solutions they deploy are reliable, cost-efficient, and built with the right architectural foundations. The rapid growth of GenAI providers makes it difficult for customers to know which partners truly have the AWS expertise to guide adoption responsibly and deliver measurable outcomes.

That’s why the AWS Generative AI Competency matters. It serves as a rigorous, third-party validation of a partner’s ability to design, implement, and operationalize GenAI solutions using technical proficiency and proven AWS best practices.

In this blog, we’ll break down what the AWS GenAI Competency means, how it can help you evaluate potential solutions, and what to consider as you navigate the GenAI landscape.

Why does the AWS Generative AI Competency matter?

According to AWS, the Generative AI competency is an:

AWS Specialization that helps Amazon Web Services (AWS) customers more quickly adopt generative AI solutions and strategically position themselves for the future. AWS Generative AI Competency Partners provide a full range of services, tools, and infrastructure—with tailored solutions in areas like security, applications, and integrations to give customers flexibility and choice across models and technologies.

“Partners play an important role in supporting AWS customers leveraging our comprehensive suite of generative AI services. We are excited to recognize and highlight partners with proven customer success with generative AI on AWS through the AWS Generative AI Competency, making it easier for our customers to find and identify the right partners to support their unique needs.” ~ Swami Sivasubramanian, Vice President of Database, Analytics and ML, AWS

Benefits of working with a Generative AI AWS Partner

AWS Generative AI partners can help with:

GenAI Strategy & Readiness Validation

To achieve the GenAI Competency, partners must demonstrate the ability to assess an organization’s readiness for generative AI—evaluating business goals, data posture, architectural maturity, and operational constraints.

nOps helps teams validate their GenAI readiness through our GenAI Model Assessment, which analyzes current large language model (LLM) usage and identifies opportunities to migrate to more cost-effective and higher-performing models on Amazon Bedrock. Organizations gain clarity on which workloads are viable for migration, projected savings, potential quality impact, and the architectural considerations needed to operationalize GenAI at scale.

Once the assessment is complete, nOps can share these findings with your AWS account team or implementation partner, giving them the data they need to validate the use case and plan a migration path aligned to your technical and business goals.

Expertise in Building & Operationalizing GenAI on Amazon Web Services

Competency partners must show proven capabilities in designing, deploying, and operating GenAI applications using AWS services such as Amazon Bedrock, Amazon SageMaker, and AWS-native orchestration patterns.

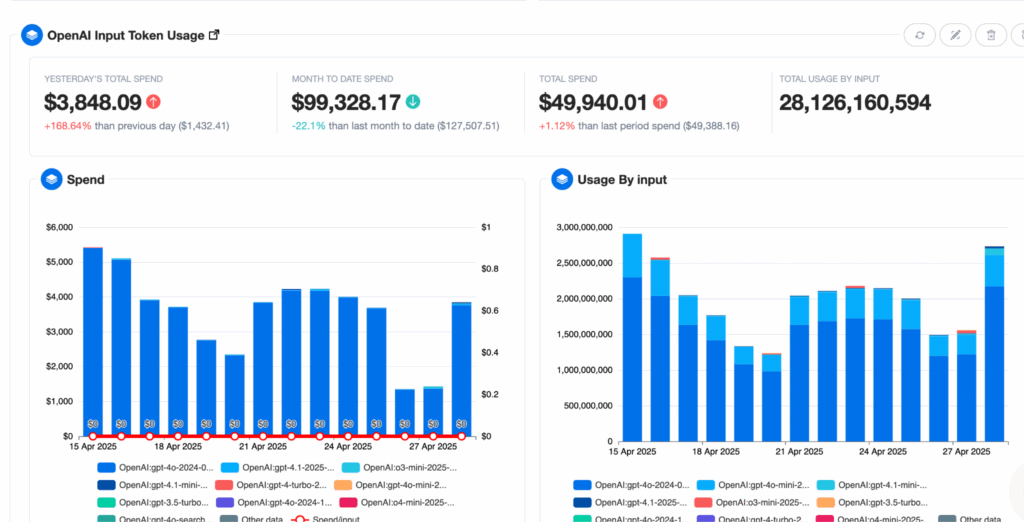

nOps gives teams the visibility they need to confidently build and run GenAI workloads in production. With unified insights into token usage, performance trends, latency behavior, cost drivers, and workload patterns, engineering teams can quickly understand how their applications behave in real environments. This includes full GenAI cost visibility (token breakdowns, model-by-model cost attribution, project-level usage, and forecasting) that keeps GenAI spend predictable as workloads scale. Together, these insights help teams identify bottlenecks, validate architectural decisions, and ensure that GenAI applications scale reliably on AWS, reducing guesswork and accelerating delivery.

Foundation Model Selection & Cost Optimization

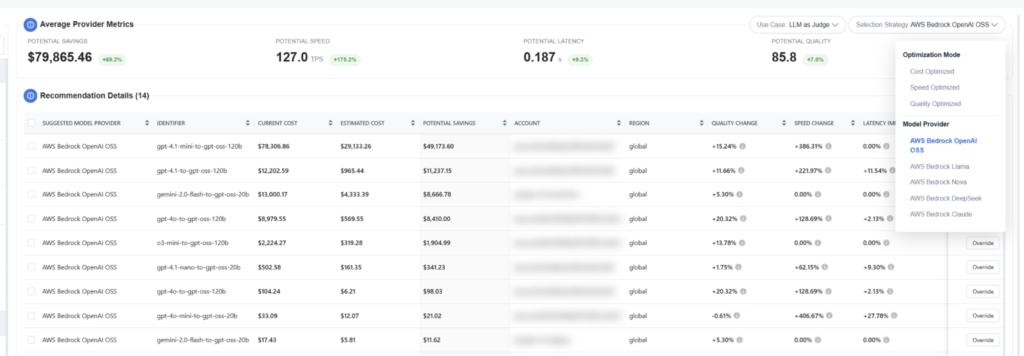

AWS requires partners to demonstrate systematic model evaluation—benchmarking cost, performance, accuracy, context window, and workload fit.

With AI model recommendations, nOps helps you compare your current LLM usage (OpenAI, Gemini, Claude, DeepSeek, etc.) against Bedrock alternatives. Because nOps provides unified views across all major LLM providers, customers can benchmark models side-by-side across cost, latency, quality, and efficiency before choosing a foundation model. We provide cost-reduction recommendations, quality and latency comparisons, MMLU-based scoring across models, and guidance on which workloads to migrate. This makes it easy to identify equivalent or better-performing Bedrock models that can reduce GenAI costs by up to 80%.
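To make the side-by-side comparison concrete, here is a minimal Python sketch of the underlying arithmetic: estimate monthly cost from token volume and per-token prices, then keep only cheaper candidates whose quality score stays close to the current model's. This is an illustration, not how nOps computes its recommendations. The names `ModelProfile`, `monthly_cost`, and `viable_alternatives`, the quality tolerance, and any prices you plug in are assumptions; real prices and benchmark scores should come from the providers' published data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical description of one model; all values are placeholders."""
    name: str
    input_price_per_1k: float   # USD per 1,000 input tokens
    output_price_per_1k: float  # USD per 1,000 output tokens
    quality_score: float        # benchmark-style score, e.g. 0-100


def monthly_cost(model, input_tokens, output_tokens):
    """Estimated monthly spend in USD for a given token volume."""
    return ((input_tokens / 1000) * model.input_price_per_1k
            + (output_tokens / 1000) * model.output_price_per_1k)


def savings_pct(current_cost, candidate_cost):
    """Percentage saved by switching from the current to the candidate cost."""
    return 100.0 * (current_cost - candidate_cost) / current_cost


def viable_alternatives(current, candidates, input_tokens, output_tokens,
                        max_quality_drop=2.0):
    """Return (name, cost, savings %) for cheaper candidates whose quality
    score is at most `max_quality_drop` points below the current model's,
    sorted from cheapest to most expensive."""
    base = monthly_cost(current, input_tokens, output_tokens)
    results = []
    for cand in candidates:
        if cand.quality_score < current.quality_score - max_quality_drop:
            continue  # quality drop is larger than the tolerance allows
        cost = monthly_cost(cand, input_tokens, output_tokens)
        if cost < base:
            results.append((cand.name, round(cost, 2),
                            round(savings_pct(base, cost), 1)))
    return sorted(results, key=lambda row: row[1])
```

In practice, a single benchmark score is only a rough proxy for quality, so a comparison like this is best treated as a shortlist to validate against your own workloads.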

Security, Privacy & Compliance Alignment with Responsible AI Practices

Competency partners must demonstrate strong controls around data security, privacy management, and responsible handling of AI-powered workloads. AWS requires proof that partners follow responsible AI practices with rigorous technical validation, including risk assessment, transparency, bias mitigation, and explainability.

nOps aligns with AWS security frameworks, ensuring that Bedrock migrations and GenAI workloads meet AWS standards for isolation, encryption, and least-privilege access.

It also helps customers evaluate model output patterns, cost anomalies, and quality scores, giving teams transparency into how their AI behaves over time. Our benchmarking and scoring tools support responsible decision-making for Generative AI applications by making it easy to compare accuracy, data risk, and performance across models.

Ongoing Optimization & Support for AI Workloads

Competency partners must offer guidance that continues beyond initial deployment—ensuring AI workloads and Generative AI initiatives evolve, costs remain controlled, and performance stays consistent.

As part of ongoing support, nOps provides GenAI budgeting and cost governance features (budgets, alerts, cost anomaly detection, and other guardrails) to ensure workloads remain optimized long after migration.
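As a rough illustration of what budget alerts and cost anomaly detection involve, the Python sketch below checks spend against a budget threshold and flags days whose cost deviates sharply from a trailing window. It is a generic z-score approach under stated assumptions, not a description of nOps's detection logic. The function names, the 80% alert threshold, the 7-day window, and the z-score cutoff of 3.0 are all hypothetical choices.

```python
from statistics import mean, pstdev


def budget_status(spend_to_date, budget, alert_threshold=0.8):
    """Classify spend against a budget: 'ok', 'alert', or 'over_budget'."""
    ratio = spend_to_date / budget
    if ratio >= 1.0:
        return "over_budget"
    if ratio >= alert_threshold:
        return "alert"
    return "ok"


def find_anomalies(daily_costs, window=7, z_threshold=3.0):
    """Return indices of days whose cost is more than `z_threshold`
    standard deviations away from the mean of the preceding `window` days."""
    anomalies = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all counts as a deviation.
            if daily_costs[i] != mu:
                anomalies.append(i)
            continue
        if abs(daily_costs[i] - mu) / sigma > z_threshold:
            anomalies.append(i)
    return anomalies
```

A trailing-window check like this is easy to reason about, but it ignores weekly seasonality and gradual growth, which production-grade detection would typically need to account for.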

AWS GenAI Customer Success Stories

Check out these two real-world examples of how nOps helps organizations implement Generative AI solutions on AWS.

Enter Cuts Generative AI Costs and Gains Full AI Spend Visibility with nOps

Enter, a fast-growing developer tools startup backed by Sequoia, needed a better way to track rapidly increasing GenAI spend across OpenAI and AWS. Without a dedicated FinOps team, they struggled to understand credit burn, forecast usage, and allocate costs across teams and projects.

With nOps, Enter gained full visibility into token usage, credit burndown, and per-project Generative AI costs. nOps also enabled OpenAI cost forecasting, workload-aware cost allocation, and EKS optimization to reduce container waste. Finance and engineering now share a unified view of cloud and GenAI spend, backed by real-time dashboards that surface savings opportunities.

Rose Rocket Improves Generative AI Model Efficiency and Reduces Cost with nOps

Rose Rocket, a leader in transportation management software, expanded its use of Generative AI across OpenAI and Amazon Bedrock but lacked visibility into latency, token efficiency, and real model performance. They needed a clearer way to benchmark providers and choose the best model for cost and experience.

nOps used its AI Model Recommendation engine to compare OpenAI, Bedrock Claude, DeepSeek, Llama, and Nova models across latency, cost, and quality (via MMLU). The analysis revealed opportunities to reduce costs by up to 30% and cut latency by as much as 40%, enabling Rose Rocket to make data-driven decisions instead of treating GenAI as a black box.

“nOps has provided Rose Rocket with a clear way to compare GenAI models side by side, showing the differences in cost and efficiency. It helped us spot where we could save money and make more informed decisions about which models and providers to use.”

— Jeff Webb, Senior Engineering Leader, Rose Rocket

The Bottom Line

Earning the AWS Generative AI Competency validates what our hundreds of AWS customers already know: nOps helps organizations innovate with Generative AI faster, more safely, and at a lower cost.

Whether you’re migrating to AWS, modernizing workloads, or keeping Generative AI spend under control, nOps is ready to accelerate your journey.

Ready to see it live?

Start a free trial on AWS Marketplace — time-to-value is under 30 minutes.

nOps is exclusively available through the AWS Marketplace. It manages $3 billion in AWS spend and is rated 5 stars on G2.